The AI Content Pipeline: How I Publish 3x a Week Without a Content Team

Most technical professionals have the same problem. You have ideas. Good ones. You see patterns in your work, learn things worth sharing, form opinions backed by experience. But the distance between “I should write about that” and a published post is enormous. Research takes hours. Writing takes more. Editing, formatting, generating visuals, publishing, promoting: each step is a tax on your time. So you don’t publish. Or you publish once a quarter, when guilt finally outweighs friction.

I used to be in that camp. Now I publish three times a week. Not because I write faster. Because I built a pipeline.

This post is about that pipeline. Not the implementation details — those come later. This is about the architecture of content creation when AI agents handle the 80% that isn’t the creative core.

The Content Creation Bottleneck

The blank page. Most technical professionals never get past it — not because they lack ideas, but because the pipeline from idea to published post has too many steps.

Let’s be honest about where time goes when you write a technical post.

The actual writing — forming your argument, choosing your words, deciding what matters — takes maybe 20% of the total effort. The other 80% is everything around it:

- Research: Verifying claims against official documentation. Checking that the API you’re referencing still works that way. Finding the paper that supports your argument.

- Structure: Organizing sections. Making sure the narrative flows. Deciding what to cut.

- Review: Reading it again with fresh eyes. Catching logical gaps. Asking “would this make sense to someone who doesn’t have my context?”

- Visuals: Creating diagrams that actually explain the architecture. Generating cover images. Formatting code blocks.

- Publishing: Converting from your writing tool to your blog format. Managing assets. Deploying.

- Distribution: Writing a LinkedIn teaser that hooks people. Scheduling. Cross-posting.

Each step is individually manageable. Together, they’re a wall. And the wall is why most people’s “I should write about that” list grows forever while their published post count stays flat.

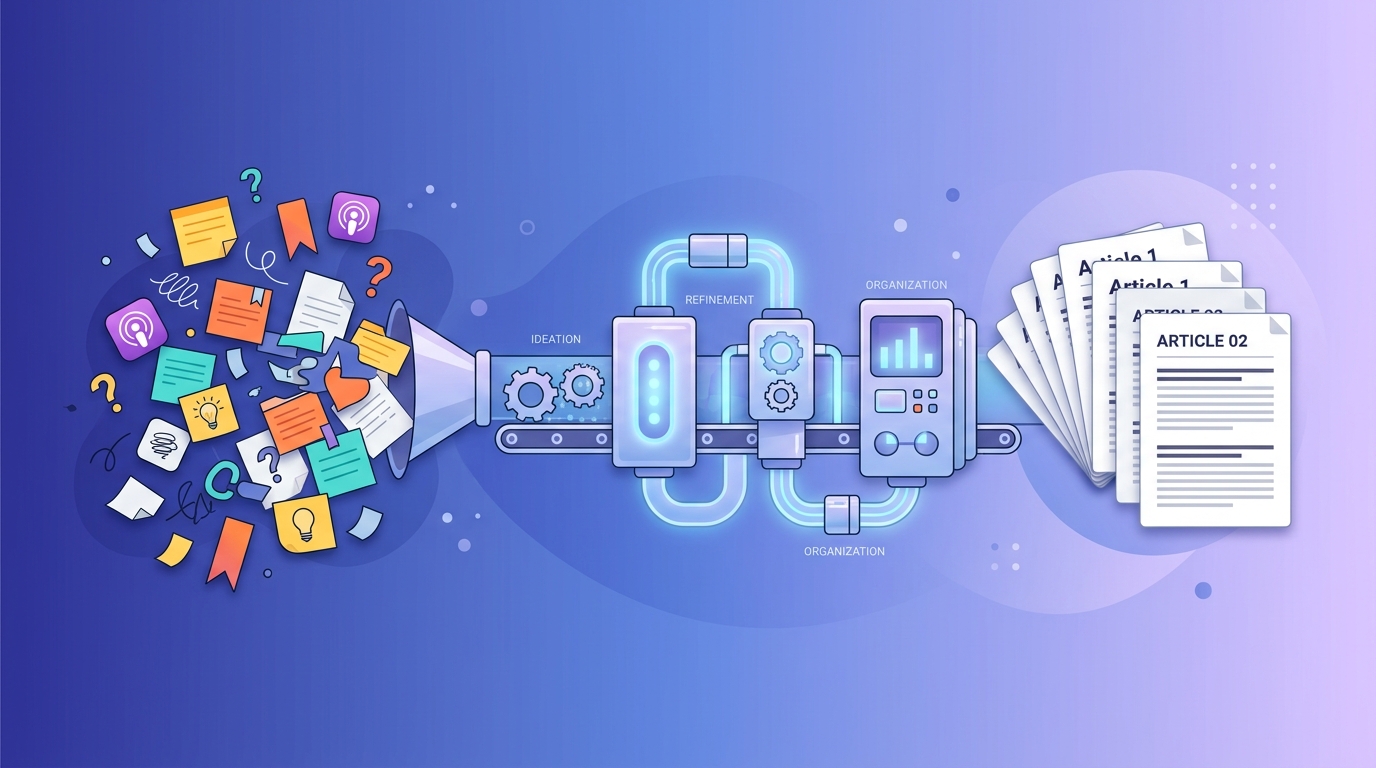

The Pipeline at a Glance

From scattered inputs to published posts — the pipeline handles the transformation.

The pipeline has five phases. Each phase has a clear division of labor between human judgment and agent execution.

| Phase | What Happens | Human Role | Agent Role |
|---|---|---|---|
| Ingest | Raw material flows in, patterns emerge | Curate sources, pick the angle | Monitor feeds, capture signals, surface connections |
| Research | Deep-dive against primary sources | Direct the investigation | Fetch, verify, cross-reference |
| Collaborative Writing | The post takes shape | Write the draft, make editorial decisions | Assist, challenge, restructure |
| Editing | Quality assurance and visuals | Final judgment calls | QA checks, diagram rendering, fact-checking |
| Publishing | Deploy and distribute | Confirm before going live | Build, deploy, generate teasers, track analytics |

This is not a waterfall. At any point, you can loop back to an earlier phase. Research reveals your thesis is wrong — back to Ingest for a new angle. Review surfaces a logical gap — back to Research for more evidence. QA catches an unsupported claim — back to Collaborative Writing to fix it. The phases describe the general flow, but the actual process is iterative. Most posts loop through Research → Writing → Review two or three times before reaching Editing.

The pipeline flows left to right but loops back freely. Most posts iterate between Research, Writing, and Review multiple times.

The pattern is consistent: agents handle volume and mechanics, humans handle judgment and creativity. Let’s walk through each phase.

Phase 1: Ingest

Ideas don’t come from staring at a blank page. They come from the work you’re already doing. The ingestion phase has two layers.

Continuous feeds and ad-hoc captures accumulate in a knowledge base. The agent surfaces patterns; the human picks the angle.

Layer 1: Continuous Feeds

The foundation is a set of trusted sources that the agent monitors automatically. I configure these once — podcast channels, technical blogs, YouTube channels, newsletter feeds — and the agent checks for new content on a regular cadence.

When new content arrives, the agent doesn’t just bookmark it. It downloads transcripts, extracts key arguments, identifies themes, and files everything in a searchable knowledge base with tags and connections to existing notes. Over time, this builds a backlog of ideas that I never had to curate manually.

The key word is trusted. I’m deliberate about which sources feed the pipeline. Not everything on the internet deserves a slot. The sources I choose represent voices I respect, topics I care about, and perspectives that challenge my thinking.

Layer 2: Ad-Hoc Captures

On top of the continuous feeds, I capture individual items as I encounter them in daily work:

- A topic or content idea — something I want to explore, triggered by a conversation or a pattern I noticed

- An interesting post someone shared in a team channel or on social media

- A customer question that reveals a common misconception worth addressing

- A conference talk that connects two ideas I hadn’t linked before

The capture mechanism is lightweight — I forward an email to myself, drop a note, or just tell the agent “add this to the reading list.” The agent fetches the content, summarizes it, and files it alongside the continuous feed items.

From Inputs to Ideas

Raw inputs aren’t ideas. An article about a new vulnerability isn’t a blog post. A customer question about container security isn’t a narrative. The creative leap — connecting dots, finding the angle, identifying what’s worth saying — is fundamentally human.

But agents help you see connections you’d miss. When I review my accumulated inputs, the agent surfaces patterns: “You’ve saved three articles about AI-driven vulnerability discovery this week. Your last meeting included a question about automated security scanning. There’s a thread in a security-focused channel about the same topic.” That pattern recognition doesn’t write the post. It tells me where the energy is.
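A minimal sketch of that pattern surfacing, assuming each capture is stored with a date and a set of tags. The `Capture` schema, the seven-day window, and the threshold of three hits are hypothetical choices for illustration, not the actual knowledge base format.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Capture:
    """One item in the knowledge base (hypothetical schema)."""
    title: str
    source: str                      # e.g. "feed", "meeting", "channel"
    captured_on: date
    tags: set[str] = field(default_factory=set)


def surface_patterns(captures, today, window_days=7, min_hits=3):
    """Return tags that appear in at least `min_hits` recent captures."""
    cutoff = today - timedelta(days=window_days)
    recent = [c for c in captures if c.captured_on >= cutoff]
    # Count each tag once per capture, across all recent captures.
    counts = Counter(tag for c in recent for tag in c.tags)
    return {tag: n for tag, n in counts.items() if n >= min_hits}
```

The function only reports where inputs cluster. Picking the angle stays outside the code.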

The human decision is: what’s my angle? What do I believe about this topic that’s worth arguing? What would I want to read?

For the 732-byte wake-up call post, the inputs were a YouTube video about the Copy Fail exploit, Anthropic’s Mythos cybersecurity assessment, and a Kubernetes security analysis. The angle — that these two events arriving simultaneously signal a fundamental shift in the security equilibrium — was mine. The agent didn’t generate that thesis. But it made sure all three inputs were in front of me at the same time.

Phase 2: Research

Once I have a thesis, I need evidence. For a technical post, that means official documentation, research papers, source code, and expert analysis. Doing this manually takes hours. With agents, it takes minutes.

The agent verifies claims against a trust hierarchy of sources and flags contradictions for human resolution.

The research agent works against a trust hierarchy:

- Official documentation — AWS docs, kernel docs, RFC specs. The authoritative source.

- Primary sources — The actual research paper, the actual CVE, the actual commit.

- Expert analysis — Blog posts from recognized experts, conference talks, peer-reviewed commentary.

- Community signals — Forum discussions, Stack Overflow, team chat threads. Useful for “is this a known issue?” but not for factual claims.

The agent doesn’t just fetch links. It reads the content, extracts relevant sections, cross-references claims, and flags contradictions. The hierarchy isn’t about infallibility — it’s about default authority. When sources contradict each other, the agent flags the conflict rather than silently picking a winner. The human resolves it.
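The "default authority, but flag conflicts" rule can be sketched as below. This is an illustration under stated assumptions: the `Trust` levels mirror the four tiers above, while `Finding` and `assess` are hypothetical names and not the research agent's real interface.

```python
from dataclasses import dataclass
from enum import IntEnum


class Trust(IntEnum):
    """Lower value means higher default authority."""
    OFFICIAL_DOCS = 1
    PRIMARY_SOURCE = 2
    EXPERT_ANALYSIS = 3
    COMMUNITY_SIGNAL = 4


@dataclass(frozen=True)
class Finding:
    source: str
    trust: Trust
    supports_claim: bool


def assess(findings):
    """Summarize evidence for one claim without silently picking a winner."""
    # Community signals are context only, not evidence for factual claims.
    evidence = [f for f in findings if f.trust != Trust.COMMUNITY_SIGNAL]
    if not evidence:
        return {"status": "unverified", "conflict": False, "lead": None}
    verdicts = {f.supports_claim for f in evidence}
    lead = min(evidence, key=lambda f: f.trust)  # highest default authority
    return {
        "status": "supported" if lead.supports_claim else "contradicted",
        "conflict": len(verdicts) > 1,  # True means: hand it to the human
        "lead": lead.source,
    }
```

The `status` field reflects only the most authoritative source. Whenever `conflict` is true, that status is a starting point for the human, not a verdict.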

If I write “AF_ALG ships enabled in every mainstream distribution,” the agent verifies that against the actual kernel configs. If I claim “Mythos found a 27-year-old bug in OpenBSD,” the agent checks the Anthropic assessment for the exact wording.

This verification step is non-negotiable. AI agents are confident. They’ll present plausible-sounding claims that are subtly wrong. The research stage isn’t “ask the agent what’s true.” It’s “tell the agent to verify what I’m claiming against primary sources.”

Phase 3: Collaborative Writing

This phase has two stages that alternate: drafting and review.

The human writes, the agent reviews systematically, and the cycle repeats 2-3 times before moving to editing.

Drafting

Here’s where the human does the actual work.

I write the first draft. The core argument, the narrative structure, the voice — that’s mine. I write in markdown, with no formatting concerns. Just the argument.

The agent’s role during drafting is limited to what I ask for: “expand this section with the technical details from the research,” “add the code example we found,” “restructure these three paragraphs — the logic flows better if I lead with the impact.”

The key discipline: the agent assists the draft. It doesn’t generate it. The moment you let an agent write your first draft, you lose your voice. And voice is the only thing that distinguishes your post from the ten thousand other posts about the same topic.

Review and Discussion

This is the stage most solo writers skip entirely. You can’t review your own work effectively. You’re too close to it. You know what you meant, so you read what you meant instead of what you wrote.

An AI agent makes a surprisingly good sparring partner. Not because it has opinions — it doesn’t. But because it can systematically check things a human reviewer would catch:

- Logical gaps: “You claim X in paragraph 3 but your evidence in paragraph 7 actually supports Y. Which do you mean?”

- Audience mismatch: “This section assumes the reader knows what scatter-gather lists are, but your intro targets cloud practitioners, not kernel developers.”

- Unsupported claims: “You say ‘most organizations’ but cite no data. Can you quantify or soften?”

- Structural issues: “Sections 4 and 6 make the same point. Merge or differentiate.”

I treat this as a conversation. The agent raises issues. I decide which ones matter. Sometimes the agent is wrong — it flags something as unclear that’s intentionally provocative. Sometimes it catches a genuine blind spot. The back-and-forth typically takes 2-3 rounds and catches things I’d have missed until after publishing.

Phase 4: Editing

After the collaborative writing phase, the agent runs systematic quality checks. This isn’t creative — it’s mechanical, which makes it perfect for automation.

Automated QA (challenging questions, fact-checking, consistency) and visual generation feed into a final human judgment call.

Automated Quality Assurance

- Challenging questions: The agent generates 5-10 questions a skeptical reader might ask. “What about serverless environments — does this exploit apply?” “You recommend custom seccomp profiles, but what’s the operational cost?” If I can’t answer them, the post has a gap.

- FAQ generation: Based on the content, the agent generates a FAQ that tests whether the post actually explains what it claims to explain. If the FAQ reveals that a key concept is never defined, I add a definition.

- Fact-checking: Every factual claim is verified against the sources cited. Every link is checked. Every code block is validated for syntax.

- Consistency check: Numbers in the summary match numbers in the body. The title promises what the content delivers.

This stage catches the embarrassing errors — the broken link, the wrong CVE number, the claim that contradicts your own source. The kind of mistakes that erode trust and that manual review misses because you’ve read the post six times and your brain autocorrects.
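Some of these checks are simple enough to sketch with the standard library. The helpers below are hypothetical and deliberately naive: they compare numbers as plain strings, collect inline markdown link targets for a separate link checker, and only detect an unclosed code fence rather than validating syntax.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")
LINK = re.compile(r"\[[^\]]*\]\(([^)\s]+)\)")
FENCE = "`" * 3  # markdown code fence marker


def numbers_missing_from_body(summary, body):
    """Numbers quoted in the summary that never appear in the body."""
    body_numbers = set(NUMBER.findall(body))
    return sorted(set(NUMBER.findall(summary)) - body_numbers)


def extract_links(markdown):
    """Collect inline link targets so each one can be checked later."""
    return LINK.findall(markdown)


def unbalanced_code_fences(markdown):
    """True if the post has an odd number of fence lines (a block never closes)."""
    fences = [ln for ln in markdown.splitlines() if ln.lstrip().startswith(FENCE)]
    return len(fences) % 2 == 1
```

A summary that says "27 years" while the body never mentions 27 would show up in `numbers_missing_from_body`, which is the kind of mismatch a sixth read-through tends to miss.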

Visuals

Technical posts need diagrams. Architecture flows, comparison tables, process diagrams — they break up walls of text and explain relationships that prose struggles with.

I describe what I need in natural language: “Show the normal AEAD flow vs. the exploit flow, with the splice() injection highlighted in red.” The agent generates Mermaid diagram code, renders it to SVG, checks that the colors have sufficient contrast, and places it in the post with an appropriate caption.
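The post does not say how the contrast check works. One plausible approach, shown here as an assumption, is the WCAG 2.x contrast ratio between a text color and its background, with 4.5:1 as the usual threshold for normal-size text. The function names are illustrative.

```python
def _linear(channel_8bit):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground, background):
    """WCAG contrast ratio, from 1.0 (identical) to 21.0 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background, threshold=4.5):
    """True if the pair meets the assumed AA threshold for normal text."""
    return contrast_ratio(foreground, background) >= threshold
```

Pure red text on a white background comes out just under 4:1 with this formula, so a "highlighted in red" label would be flagged unless the shade is darkened or the red is used as a fill behind light text.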

For cover images, the agent generates them from prompts derived from the post’s theme. Not stock photos. Custom visuals that match the content.

The human judgment here is editorial: does this diagram actually clarify the concept, or is it visual noise? Does the cover image set the right tone? But the production work — the rendering, the formatting, the asset management — is fully automated.

Phase 5: Publishing

The final phase has two stages: deployment and distribution.

The agent handles deployment mechanics, the human confirms before going live, then distribution and analytics close the feedback loop.

Deployment

Converting a markdown draft into a published blog post involves more steps than you’d think:

- Convert frontmatter from your writing tool’s format to your blog engine’s format

- Copy and rename image assets to the right directories

- Pre-render any dynamic content (Mermaid diagrams) to static assets

- Build the site

- Deploy to production

- Verify the deployment

Each step is trivial. Together, they’re 15-20 minutes of mechanical work that you do every single time you publish. The agent handles all of it. I say “publish this” and the pipeline runs: convert, copy, build, deploy, verify.

The semi-automated part is intentional. The agent prepares everything and presents it for review. I confirm. Then it deploys. There’s a human gate before anything goes live, but the human isn’t doing the mechanical work.
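The human gate can be sketched as a function that runs the preparation steps, asks for confirmation, and only then runs the deploy steps. The step names and the `confirm` callback are hypothetical. The real pipeline's commands are not shown in this post.

```python
from typing import Callable

Step = tuple[str, Callable[[], None]]


def publish(prepare_steps: list[Step],
            deploy_steps: list[Step],
            confirm: Callable[[list[str]], bool]) -> list[str]:
    """Run preparation, ask the human, then deploy only if confirmed."""
    log: list[str] = []
    for name, step in prepare_steps:
        step()
        log.append(name)
    # Human gate: nothing below this line runs without explicit approval.
    if not confirm(log):
        log.append("aborted")
        return log
    for name, step in deploy_steps:
        step()
        log.append(name)
    return log
```

In interactive use, `confirm` could be as simple as `lambda done: input(f"Prepared {done}. Deploy? [y/N] ") == "y"`. Keeping the gate as a parameter means the mechanical steps can be tested without ever touching production.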

Distribution

A published post that nobody reads is a journal entry. Distribution is where the post finds its audience.

For LinkedIn, the agent generates a teaser — not the full post, but a hook that drives traffic to the website. The teaser matches my voice (the agent has studied dozens of my previous posts), includes the key highlights, mentions code examples if the post has them (because that audience engages more with hands-on content), and ends with a question to drive comments.

After posting, the agent collects analytics — impressions, reactions, comments, profile views — and tracks performance over time. That data feeds back into understanding what resonates with the audience, which influences future topic selection. The feedback loop closes: distribution analytics inform the next round of ingestion priorities.
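As a rough illustration of how that feedback could be computed, the sketch below ranks topics by average engagement rate. The record fields and the definition of engagement (reactions plus comments, divided by impressions) are assumptions, not the metric the analytics agent necessarily uses.

```python
from collections import defaultdict
from statistics import mean


def engagement_by_topic(posts):
    """Rank topics by mean engagement rate, highest first.

    Each post is a dict with hypothetical keys:
    'topic', 'impressions', 'reactions', 'comments'.
    """
    rates = defaultdict(list)
    for post in posts:
        if post["impressions"]:  # skip posts with no impressions yet
            engaged = post["reactions"] + post["comments"]
            rates[post["topic"]].append(engaged / post["impressions"])
    ranked = ((topic, mean(values)) for topic, values in rates.items())
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)
```

The ranking is a signal for topic selection, not a decision. A topic with two posts and one lucky hook can outrank a topic with twenty steady ones.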

The Complete Picture

Here’s the full pipeline with all phases expanded — a single reference showing how everything connects.

The complete pipeline: five phases, their internal steps, and the feedback loops that make it iterative.

What This Changes

The pipeline doesn’t make me a better writer. It makes me a more consistent one.

Before the pipeline, I published when inspiration and free time aligned — maybe once a month. Now I publish Monday, Wednesday, Friday. Not because I have more time. Because the 80% of the work that isn’t writing is handled.

An example: in the week of May 4 — an unusually productive week — I published [The Bottleneck Moved][3] (a productivity piece), [The 732-Byte Wake-Up Call][4] (a deep-dive into a Linux kernel exploit and AI-driven vulnerability discovery), [The Agent Security Stack][5] (an architecture post), [Is the AI Subsidy Era Ending?][6] (an economics analysis), and [Code Quality Is the New Infrastructure][7] (an engineering practices argument). Five posts, five different angles, one week. The standard cadence is three, but the pipeline made five possible without any of them feeling rushed — because each went through the full pipeline.

The quality goes up too. Every post gets a review process — challenging questions, fact-checking, link verification — that I would never do manually for a weekly post. The automation makes thoroughness sustainable.

And the feedback loop tightens. When you publish three times a week, you learn fast what resonates. The analytics agent shows me which topics drive engagement, which formats work, which hooks land. That signal was invisible when I published monthly.

The Key Insight

This isn’t “AI writes my blog posts.”

Read that again, because it’s the most common misconception.

The creative core (the thesis, the argument, the voice, the editorial judgment) is human, and in every post it is mine. The agent never generates the first draft. It never decides what’s worth writing about. It never chooses the angle.

What the agent does is remove every obstacle between having something to say and saying it publicly. The research that would take hours. The review that I’d skip. The formatting I’d procrastinate on. The publishing steps I’d forget. The distribution I’d neglect.

The agent handles the pipeline. The human handles the thinking.

That division of labor is what turns “I should write about that” into a published post, three times a week, every week.

Even If You Never Hit Publish

Maybe you’re reading this thinking: “I don’t want to publish. I’m not building a personal brand. I don’t need a content pipeline.”

You might still need this pipeline. Just not for the reason you think.

There’s a principle often attributed to Richard Feynman: if you can’t explain something in plain language, you don’t actually understand it. Writing is the most rigorous form of that test. When you try to explain a concept in writing, every gap in your understanding becomes visible. The hand-wavy parts you glossed over in your head suddenly need actual sentences. The “I kind of get it” becomes “wait, do I actually know how this works?”

The pipeline I described isn’t just a publishing machine. It’s a learning machine.

Run through the research phase on a topic you think you understand. Let the agent challenge your claims against primary sources. You’ll find gaps. Generate the challenging questions a skeptical reader would ask. Try to answer them. You’ll find more gaps. Write the explanation as if someone else needs to understand it. The gaps become canyons.

You don’t have to publish the result. The value is in the process:

- Research forces precision. “I think it works like X” becomes “the documentation says it works like X, except in cases Y and Z.”

- Writing forces clarity. Vague understanding survives in your head. It doesn’t survive on the page.

- Review forces honesty. When the agent asks “what evidence supports this claim?” and you don’t have any, that’s a learning moment.

- Challenging questions force depth. The questions you can’t answer are exactly the areas where your understanding is shallow.

Some of the most valuable runs through this pipeline never become blog posts. They become better architecture decisions, sharper customer conversations, and deeper expertise that shows up in everything else you do.

Publishing is optional. Understanding isn’t.

The Elephant in the Room

The question nobody wants to ask: in a world flooded with AI-generated content, does publishing more actually help?

I’m aware of the irony. This is a post about using AI to create content — itself a piece of content that was created with AI assistance. We’re approaching what Tom Fishburne calls “content about content about content” [1] — the circular creator economy where everyone is writing about writing, and AI tools accelerate the flood.

Mark Schaefer’s concept of “Content Shock” [2] — the point where exponentially increasing content volume exceeds human capacity to consume it — was already real before AI entered the picture. Now every professional with access to a language model can publish daily. P&G’s Chief Brand Officer Marc Pritchard put it bluntly: “we fell into the content crap trap.”

So why add to the pile?

Because the problem isn’t volume. It’s signal-to-noise ratio. The flood of AI-generated content makes human-authored, experience-backed, technically verified content more valuable, not less. When anyone can generate a 2,000-word post about Kubernetes security in thirty seconds, the post that’s backed by actual field experience, verified against primary sources, and stress-tested with challenging questions stands out more than it did before.

The pipeline I described doesn’t lower the bar for publishing. It raises it. Every post goes through more rigorous verification than most manually-written content ever receives. The automation isn’t replacing quality — it’s making quality sustainable at a cadence that keeps up with the conversation.

That said, I hold this belief lightly. The pipeline doesn’t force output. Every article starts with an idea — either one I generate myself or one the agent proposes based on ingested content and prior work. In both cases, I decide whether it’s worth pursuing. If that judgment says “not this week,” the pipeline stays idle. The cadence is a ceiling, not a quota.

If genuine ideas come less often than three times a week, so be it. The pipeline makes it possible to publish at that pace. It doesn’t obligate it. The threshold is always: is this good enough that I’d want to read it myself? Is this genuine? Does this add signal, not noise?

The honest answer: I don’t know yet whether 3x/week is the right cadence for the long term. I know it’s working now. The pipeline makes it easy to dial back when it stops working.

What’s Next

This post described the pipeline conceptually. In upcoming posts, I’ll dive into the implementation:

- How the ingestion layer monitors trusted sources and surfaces connections

- How the research agent verifies claims against a trust hierarchy

- How collaborative writing with an agent improves your drafts without losing your voice

- How automated editing generates challenging questions and catches errors

- How the publishing pipeline deploys a post in minutes, not hours

Each of those phases has lessons that apply beyond content creation. The patterns — trust hierarchies for verification, human-in-the-loop gates for quality, automated QA for consistency — are the same patterns you’d use for any AI-augmented workflow.

You don’t need to build all five phases at once. Start with research and review — those require nothing but a conversation with an AI model — and add automation as the friction becomes obvious.

The tools are here. The question is whether you’ll build the pipeline.

Sources

[1] Tom Fishburne: Content about Content about Content (2026)

[2] Mark Schaefer: Content Shock (2014)

[5] The Agent Security Stack Nobody Is Building

[6] Is the AI Subsidy Era Ending?

[7] Code Quality Is the New Infrastructure

❤️ Created with the support of AI (Kiro)