🎯 From Chaos to Control: Building Predictable AI Agents That Get Smarter Over Time

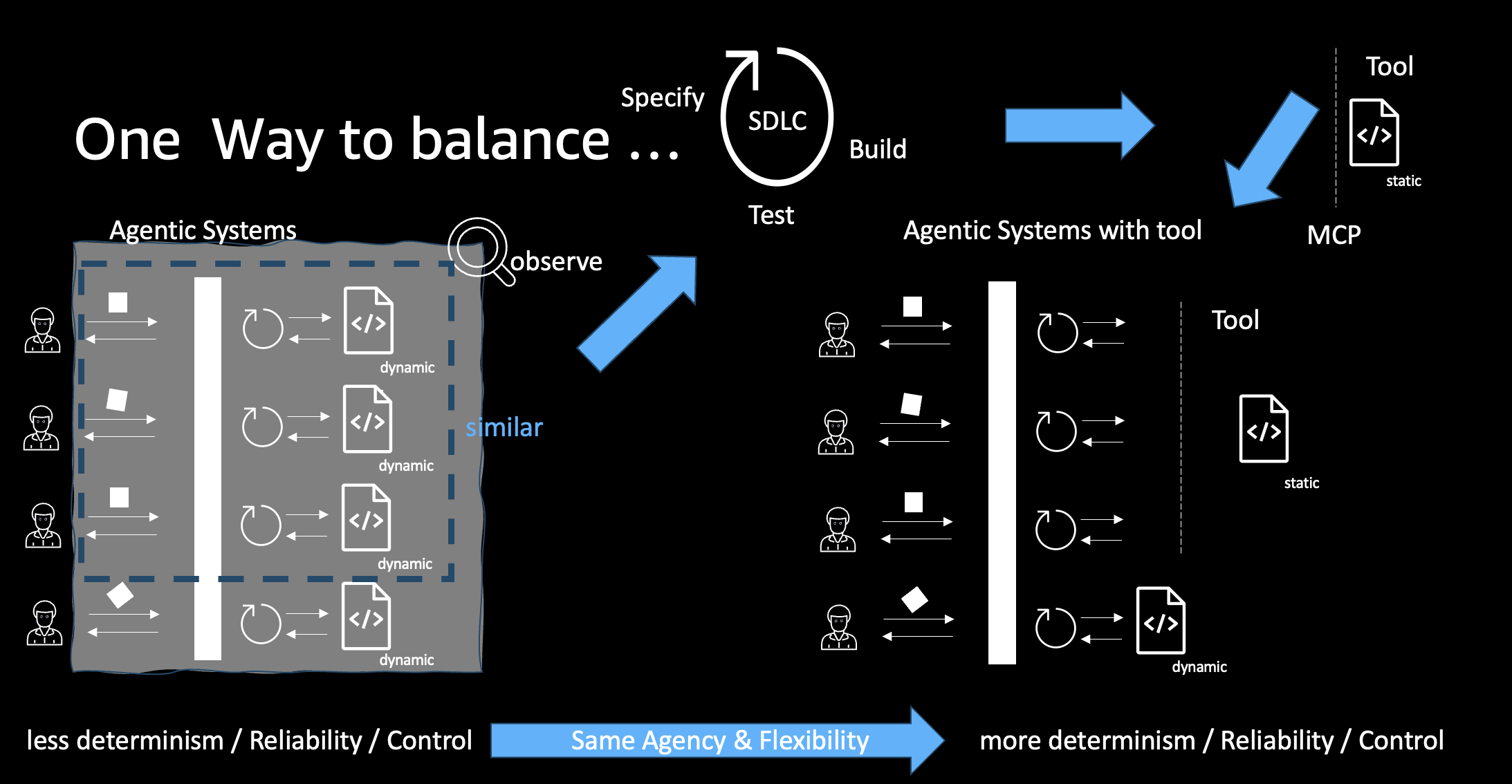

⚖️ We need to balance Agency versus Control. We want AI systems to be super easy to use, read our minds, and just provide the answer we need. But we also need to make sure that nothing goes wrong. The more we control, the less agency we get. This is a balancing act.

Let’s focus on the control part. There are many different mechanisms to increase and guarantee control. Things like policies and guardrails come to mind. Those are obvious and powerful. I will cover them in a dedicated post.

But there are more options, and they are all complementary. We will now explore a mechanism that was introduced for a different purpose: to improve the abilities of agents in the first place. An inflection point for building agents that perform well at a variety of tasks is the idea of equipping them to create and execute code. But we’re getting ahead of ourselves. Let’s start with traditional systems.

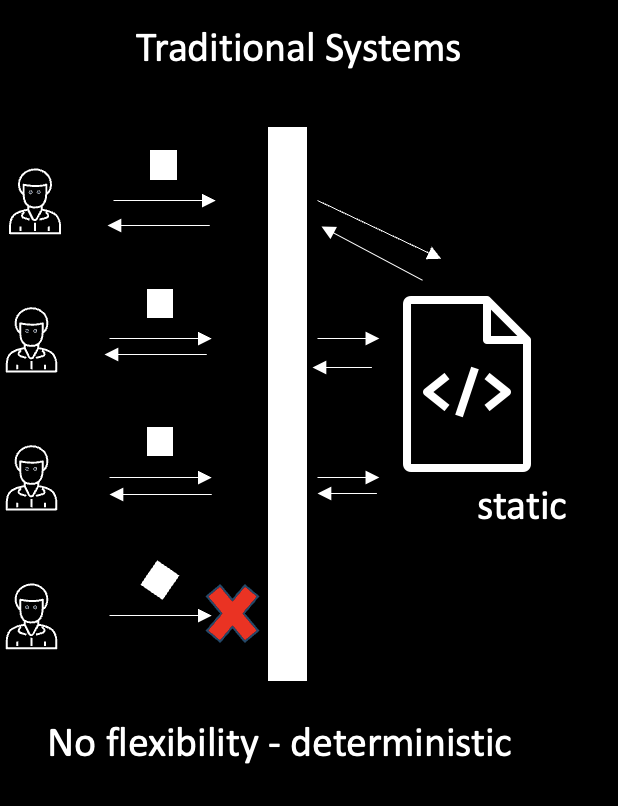

Traditional Systems

Those systems expose an API and have a static implementation that is triggered via the API. Depending on the programming language, the code might be compiled to an executable at build time or run as a script on each invocation. In both cases, the code does not change within a given version of the software, regardless of which API call is made into the system.

As the diagram shows, those systems lack flexibility. Requests can only be served successfully if the input adheres to what the system has been designed and built for. Small variations can cause requests to fail because the code doesn’t support answering that kind of request. We have long been used to this rigidity, but now that LLMs can “understand” arbitrary user input, it feels very limiting.

On the positive side, the code of the system is built upfront, and software engineering practices like writing tests, or even applying TDD (Test-Driven Development), can be used to ensure that the systems are robust and reliably provide the functionality they’re intended to. We have a lot of control here because we decide at design time which functionality the system should provide, and we ensure its quality.
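To make this concrete, here is a minimal sketch of such a static system together with tests written upfront. The `handle_request` function, its payload shape, and the test names are hypothetical and only illustrate the idea.

```python
def handle_request(payload: dict) -> dict:
    """Static handler: supports exactly one operation, fixed at build time."""
    if set(payload) != {"operation", "values"}:
        raise ValueError("payload must contain exactly 'operation' and 'values'")
    if payload["operation"] != "sum":
        raise ValueError(f"unsupported operation: {payload['operation']!r}")
    return {"result": sum(payload["values"])}


def test_supported_request():
    # The request the system was designed for succeeds.
    assert handle_request({"operation": "sum", "values": [1, 2, 3]}) == {"result": 6}


def test_small_variation_is_rejected():
    # "add" means the same to a human, but the static code does not know it.
    try:
        handle_request({"operation": "add", "values": [1, 2, 3]})
    except ValueError:
        return
    raise AssertionError("expected the variation to be rejected")


if __name__ == "__main__":
    test_supported_request()
    test_small_variation_is_rejected()
    print("all tests passed")
```

The behavior is fully pinned down by the tests, which is the control we want, but the second test also shows the rigidity: a harmless rewording of the request fails.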

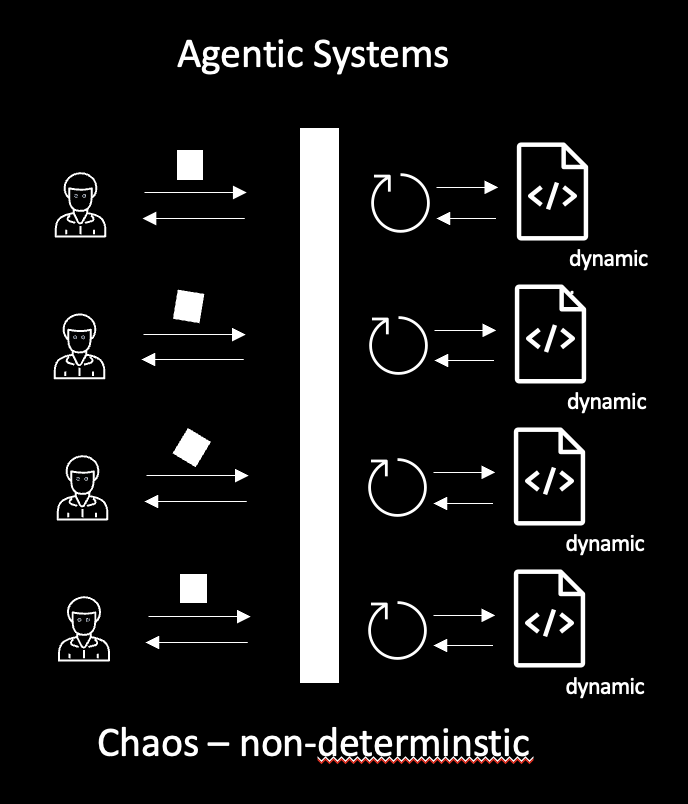

Agentic Systems in 2026

But in 2026, the world has changed. These days we are building more and more agentic systems. Those systems are very flexible in the kinds of input they accept: LLMs let users provide arbitrary input written in natural language. Early versions of those systems were very limited in their capabilities and often gave inaccurate, possibly hallucinated answers. However, modern models like the Amazon Nova 2 family [2] (strictly speaking, these are compound systems, as I discussed in [1]) can generate code, execute it in a safe environment (sandbox), and use the result of the execution in their reasoning process and consequently in their responses. This greatly improves the quality of responses and widens the set of tasks those models can complete successfully.

As we see in the diagram above, those systems are super flexible and can work on a large set of different tasks. They take the input, reason about it, create a plan, and then potentially create code, which is executed. While this is super flexible, it leads to a lot of code being created ad hoc. This ad hoc code is not produced following software engineering best practices like the tests we discussed before. There is no human oversight and no review by another LLM. Typically this code is small compared to the code bases of typical software projects, but it can still contain bugs. So we cannot really control either what kind of code is generated or what its quality is. If you take a look at the details of the diagram, you’ll spot that the first and the last request are exactly the same. Still, the system generates code for each individual invocation, so the answers may well differ. We can’t really control this.

There is another side effect to this. Each time we generate code, we consume resources to do so. The models use tokens to generate the code, possibly multiple times until they produce a working version. We pay for this both in latency and in computation cost.
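The accounting behind this can be sketched in a few lines. Everything here is an assumption made for illustration: the `GenerationAttempt` record, the `cost_of_request` helper, and the idea of a flat price per 1,000 tokens. Real pricing and retry behavior depend on the model and platform you use.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class GenerationAttempt:
    tokens: int       # tokens the model produced for this attempt
    seconds: float    # time spent generating and executing the code
    succeeded: bool   # whether the generated code ran correctly


def cost_of_request(
    attempts: List[GenerationAttempt], price_per_1k_tokens: float
) -> Tuple[int, float, float]:
    """Sum tokens, latency, and price over all attempts up to the first success."""
    total_tokens = 0
    total_seconds = 0.0
    for attempt in attempts:
        total_tokens += attempt.tokens
        total_seconds += attempt.seconds
        if attempt.succeeded:
            break  # later attempts are never made once the code works
    price = total_tokens / 1000 * price_per_1k_tokens
    return total_tokens, total_seconds, price


if __name__ == "__main__":
    # Hypothetical numbers: one failed attempt followed by a working one.
    attempts = [
        GenerationAttempt(tokens=400, seconds=2.0, succeeded=False),
        GenerationAttempt(tokens=450, seconds=2.5, succeeded=True),
    ]
    print(cost_of_request(attempts, price_per_1k_tokens=0.01))
```

Every failed attempt adds to both totals, and the whole bill is paid again the next time the same request arrives.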

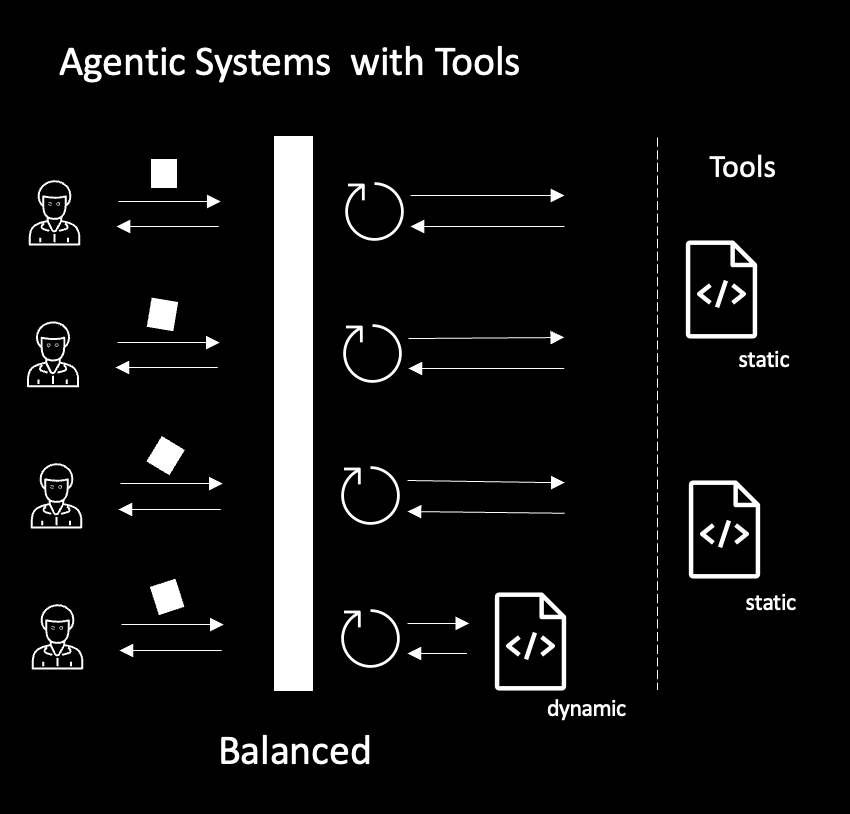

The Solution: Agentic Systems with Tools

How can we improve this? We want to keep the flexibility but don’t want to lose control.

If you are a software developer, you already know an answer. We are trained to put commonly used functionality into shared libraries that can be consumed in our code. For example, instead of every developer writing their own code to understand user attributes, we would build a function in a library that does this. Instead of multiple implementations that might vary slightly, consume expensive development time, and may not be properly tested, we implement the functionality once and then just import it wherever we need it.

In AI agents, we have a similar mechanism. We call those libraries tools. With MCP [3], we have a standard that allows us to expose those tools to AI agents and integrate them. While building those tools, we can apply all the best practices of software engineering to improve quality. We can stay in control.
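As a rough sketch of the idea, a tool is just a function that is implemented once, tested once, and registered under a name the agent can look up. The registry below is a plain-Python stand-in, not the MCP SDK; the `TOOLS` dictionary, the `tool` decorator, the `get_user_attributes` function, and the sample data are all hypothetical.

```python
from typing import Callable, Dict

# Hypothetical in-memory registry. A real setup would expose tools through
# an MCP server instead of a module-level dictionary.
TOOLS: Dict[str, Callable[..., dict]] = {}


def tool(func: Callable[..., dict]) -> Callable[..., dict]:
    """Register a function under its own name so an agent can find it."""
    TOOLS[func.__name__] = func
    return func


# Hypothetical sample data standing in for a real user store.
_USERS = {"u-1": {"name": "Ada", "tier": "premium"}}


@tool
def get_user_attributes(user_id: str) -> dict:
    """Return a copy of the stored attributes; raises KeyError if unknown."""
    return dict(_USERS[user_id])


def test_get_user_attributes():
    # The tool is tested once, upfront, like any other library function.
    assert get_user_attributes("u-1") == {"name": "Ada", "tier": "premium"}
    assert TOOLS["get_user_attributes"] is get_user_attributes


if __name__ == "__main__":
    test_get_user_attributes()
    print(sorted(TOOLS))
```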

Agentic systems with tools: maintaining flexibility while gaining more control

As you can see in the diagram above, the system has the same flexibility as the agentic system in the previous diagram. The difference is in the implementation. The system reasons about the incoming requests and uses prebuilt tools when appropriate to fulfill them. Only when it cannot find a suitable tool does it try to build code on the fly and execute it. So overall, the system’s responses become way more predictable, and we are able to improve their quality. We increased the level of control while keeping the same degree of flexibility. This is great, right?
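The routing described here can be sketched as follows. In a real agent the model itself decides which tool fits a request; this sketch assumes the intent has already been identified and passed in as a string. The names `TOOLS`, `word_count`, `generate_and_run_code`, and `handle` are hypothetical.

```python
from typing import Callable, Dict, Tuple


def word_count(text: str) -> int:
    """A prebuilt, tested tool."""
    return len(text.split())


# Hypothetical tool catalog keyed by intent name.
TOOLS: Dict[str, Callable[[str], object]] = {"word_count": word_count}


def generate_and_run_code(intent: str, argument: str) -> str:
    """Placeholder for the expensive path: an LLM writes code, a sandbox runs it."""
    return f"[ad hoc code generated for {intent!r}]"


def handle(intent: str, argument: str) -> Tuple[str, object]:
    """Prefer a prebuilt tool; fall back to code generation only if none exists."""
    tool = TOOLS.get(intent)
    if tool is not None:
        return "tool", tool(argument)
    return "generated", generate_and_run_code(intent, argument)


if __name__ == "__main__":
    print(handle("word_count", "tools keep agents predictable"))
    print(handle("reverse_text", "no tool for this yet"))
```

Returning the path taken alongside the result makes it easy to log how often the fallback is used.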

Building Tools Efficiently

Building Agentic systems with tools

But now we again need to build so much software, while those agentic systems did that automagically? Yes and no. If you think about it, there are two pieces of good news. First, with technologies like Amazon Bedrock AgentCore Gateway [4] and MCP facades in API gateways [5], we can already reuse existing software implementations today, so we are far from starting from scratch. Second, we can use AI to support us in building those tools. Tools like Kiro [6] are a very good way to ease and speed this up. Spec-driven development lets you stay in control of what is being built, and features like property-based testing are a very good start toward high-quality software.
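For readers new to property-based testing, here is a hand-rolled sketch of the idea using only the standard library. It is not how Kiro implements the feature; dedicated libraries such as Hypothesis generate and shrink inputs far more cleverly. The `dedupe_preserving_order` tool and the `check_properties` helper are hypothetical examples.

```python
import random
from typing import List


def dedupe_preserving_order(items: List[int]) -> List[int]:
    """Example tool under test: drop repeated items, keep first occurrences."""
    seen = set()
    result = []
    for item in items:
        if item not in seen:
            seen.add(item)
            result.append(item)
    return result


def check_properties(trials: int = 200, seed: int = 0) -> int:
    """Check general properties on many random inputs instead of fixed examples."""
    rng = random.Random(seed)  # fixed seed keeps the run reproducible
    for _ in range(trials):
        items = [rng.randint(0, 9) for _ in range(rng.randint(0, 20))]
        out = dedupe_preserving_order(items)
        assert len(out) == len(set(out)), "output must contain no duplicates"
        assert set(out) == set(items), "no element may be lost or invented"
        assert dedupe_preserving_order(out) == out, "function must be idempotent"
    return trials


if __name__ == "__main__":
    print(f"{check_properties()} random cases passed")
```

Instead of asserting one expected output, each assertion states a rule that must hold for every input, which tends to catch edge cases that hand-picked examples miss.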

But how do I know which tools I should be building? Again, there are two answers. First, this is part of your system’s design. You think about which kinds of user requests the system should be able to respond to, and that guides the decision of which tools to build and integrate. Second, if you build systems with a good level of observability, you can observe your system and identify recurring patterns of requests and of code being generated on the fly. Those are good candidates to be built as tools. Think about automating this process as much as possible, and you eventually get a system whose performance and reliability constantly improve as user requests come in.
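A minimal sketch of that observation step, assuming the system logs every request that fell back to on-the-fly code generation. The log format, the `normalize` heuristic, and the `tool_candidates` function are hypothetical; a production setup would more likely query its observability stack.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple


def normalize(request: str) -> str:
    """Collapse requests that differ only in case or numbers into one pattern."""
    return re.sub(r"\d+(?:\.\d+)?", "<n>", request.strip().lower())


def tool_candidates(
    fallback_log: Iterable[str], min_occurrences: int = 3
) -> List[Tuple[str, int]]:
    """Return request patterns that triggered code generation often enough."""
    counts = Counter(normalize(entry) for entry in fallback_log)
    return [(p, n) for p, n in counts.most_common() if n >= min_occurrences]


if __name__ == "__main__":
    # Hypothetical log of requests that were answered with generated code.
    log = [
        "Convert 5 km to miles",
        "convert 12.5 km to miles",
        "Summarize this paragraph",
        "Convert 42 km to miles",
    ]
    print(tool_candidates(log))
```

Patterns that cross the threshold are the ones worth turning into a tested tool; the rest can stay on the flexible fallback path.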

Conclusion

So you just identified a way to keep better control of your AI Agents while improving performance over time. Your system will become more capable and resource efficient over time. Obviously this is a vision, but this is something we will be developing towards.

What do you think?

And now go build!

Resources

[1] Compound systems - https://www.linkedin.com/pulse/when-model-isnt-just-redefining-ai-systems-builders-era-christoph-k6v1e/

[2] Amazon Nova 2 Family - https://aws.amazon.com/nova/

[3] MCP Standard - https://modelcontextprotocol.io/docs/getting-started/intro

[4] Amazon Bedrock AgentCore Gateway - https://aws.amazon.com/blogs/machine-learning/introducing-amazon-bedrock-agentcore-gateway-transforming-enterprise-ai-agent-tool-development/

[5] MCP facades in API gateways - https://aws.amazon.com/about-aws/whats-new/2025/12/api-gateway-mcp-proxy-support/

[6] Kiro - https://kiro.dev/

Cross-posted to LinkedIn