On the Loop, Not In It — But Code Quality Still Matters

Yesterday one of my AI agents wasted 15 minutes chasing a bug that didn’t exist. The function was called transformPayload() — but it didn’t transform anything. It validated. The agent built three layers of transformation logic on top of it before realizing the name was a lie. I’ve seen this pattern dozens of times now. And it’s exactly why I think Kief Morris’s latest piece gets the big picture right but undersells one critical detail.
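To make the naming problem concrete, here is a minimal Python sketch. Only the name comes from the incident above (written in Python style as `transform_payload`); the function bodies, the payload shape, and the `validate_payload` rename are assumptions for illustration, not the actual code the agent was working with.

```python
def transform_payload(payload: dict) -> dict:
    """Misleading name: despite 'transform', this only validates."""
    if "id" not in payload:
        raise ValueError("payload is missing 'id'")
    return payload  # returned unchanged, nothing is transformed


def validate_payload(payload: dict) -> None:
    """Honest name (hypothetical rename): raises on bad input, returns nothing."""
    if "id" not in payload:
        raise ValueError("payload is missing 'id'")


original = {"id": 7, "body": "hello"}
result = transform_payload(original)
print(result is original)  # the "transformed" payload is the very same object
validate_payload(original)  # same check, but the name says what it does
```

A reader, human or agent, who sees only the call site has no reason to doubt the first name, which is how layers of logic can end up built on a wrong assumption.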

Technology Evolution Doesn't Move in a Straight Line—It Spirals

The Proud Ops Colleague

Years ago, an Ops colleague proudly showed me something new. ClusterSSH—cssh [1]. A tool that opens multiple terminals to multiple machines—at the same time. You type once, it executes everywhere.

Back then, machines still had names. Ops folks knew their history, their specs, their quirks. They could tell you which server had been acting up last Thursday and what firmware it was running. And cssh? It let them follow the runbook consistently across every node. No more SSH-ing into machines one by one, hoping you didn’t forget a step on node 7.

🔧 𝗧𝗵𝗲 𝗠𝗮𝗶𝗻𝘁𝗲𝗻𝗮𝗻𝗰𝗲 𝗧𝗿𝗮𝗽: 𝗪𝗵𝘆 𝗬𝗼𝘂𝗿 𝗜𝗧 𝗦𝘆𝘀𝘁𝗲𝗺𝘀 𝗔𝗿𝗲 𝗠𝗼𝗿𝗲 𝗟𝗶𝗸𝗲 𝗣𝗹𝗮𝗻𝘁𝘀 𝗧𝗵𝗮𝗻 𝗦𝘁𝗼𝗻𝗲𝘀

After years of watching organizations struggle with outdated systems, I’ve written about a pattern we all know too well—the maintenance trap in IT.

Here’s the uncomfortable truth: We’ve all seen those systems that haven’t been updated in years. Aging interfaces, accumulating bugs, mounting security risks. We assess the cost of updates, weigh the business value, and often decide to “just skip this one.”

IT System Maintenance in the age of AI

Introduction - The Maintenance Trap in IT

You don’t need to be in the IT industry for long to have witnessed this firsthand. Even non-IT users have seen them: systems that haven’t been maintained for ages. From a user perspective, you “just” see a dated user interface, features that never evolve, and old bugs or quirks that users, possibly generations of them, have come to accept. As a user, you should keep an eye on this. Often it means not only that the system becomes cumbersome to use, but also that it may no longer receive security updates. We will see shortly that updating might not even be possible anymore. So think carefully about what kind of data you put into such a system.

Passing on control to your AI coding agent team entirely?

Anthropic researcher Nicholas Carlini conducted a stress test of their Claude Opus 4.6 model by deploying 16 parallel AI agents to build a complete C compiler, written in Rust, from scratch (https://lnkd.in/eGMp4b2K). Over approximately two weeks and nearly 2,000 Claude Code sessions, the agents autonomously produced a 100,000-line compiler capable of compiling the Linux 6.9 kernel across multiple architectures (x86, ARM, and RISC-V). The experiment cost around $20,000 in API fees and demonstrated that coordinated AI agent teams can tackle complex systems programming challenges that traditionally require significant human expertise and architectural oversight.

Kiro Subagents: Scaling Development with Specialized AI Agents

When you’re building complex software, context management becomes your bottleneck. Your AI agent is juggling frontend components, backend APIs, database schemas, testing frameworks, and documentation—all competing for limited context window space. The result? Diluted focus and suboptimal outputs.

Kiro Subagents solve this architectural challenge by enabling parallel task execution through specialized, autonomous agents that maintain independent context windows.
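The idea of parallel tasks with independent contexts can be sketched in a few lines of Python. This is a generic illustration, not Kiro's API: the `Subagent` class, its `run` method, and the task names are all hypothetical, and a plain thread pool stands in for whatever orchestration Kiro actually uses.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Subagent:
    """Hypothetical stand-in for a specialized agent; not Kiro's API."""
    role: str
    context: list[str] = field(default_factory=list)  # private to this agent

    def run(self, task: str) -> str:
        # A real agent would call a model here; this sketch only records the task.
        self.context.append(task)
        return f"{self.role} finished: {task}"


agents = {
    "frontend": Subagent("frontend"),
    "backend": Subagent("backend"),
    "testing": Subagent("testing"),
}
tasks = {
    "frontend": "build login form",
    "backend": "add /login endpoint",
    "testing": "write login tests",
}

# Run the tasks in parallel; every agent only ever sees its own context.
with ThreadPoolExecutor() as pool:
    futures = {name: pool.submit(agents[name].run, task) for name, task in tasks.items()}
    results = {name: future.result() for name, future in futures.items()}

for summary in results.values():
    print(summary)
```

The point of the sketch is the data layout: each agent's `context` list holds only its own task, so the frontend work never competes with the backend work for the same window.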

🏗️ The Architecture: Parallel Contexts, Focused Execution

Subagents operate as independent processes with their own context management. This architectural pattern delivers several technical advantages:

🎯 From Chaos to Control: Building Predictable AI Agents That Get Smarter Over Time

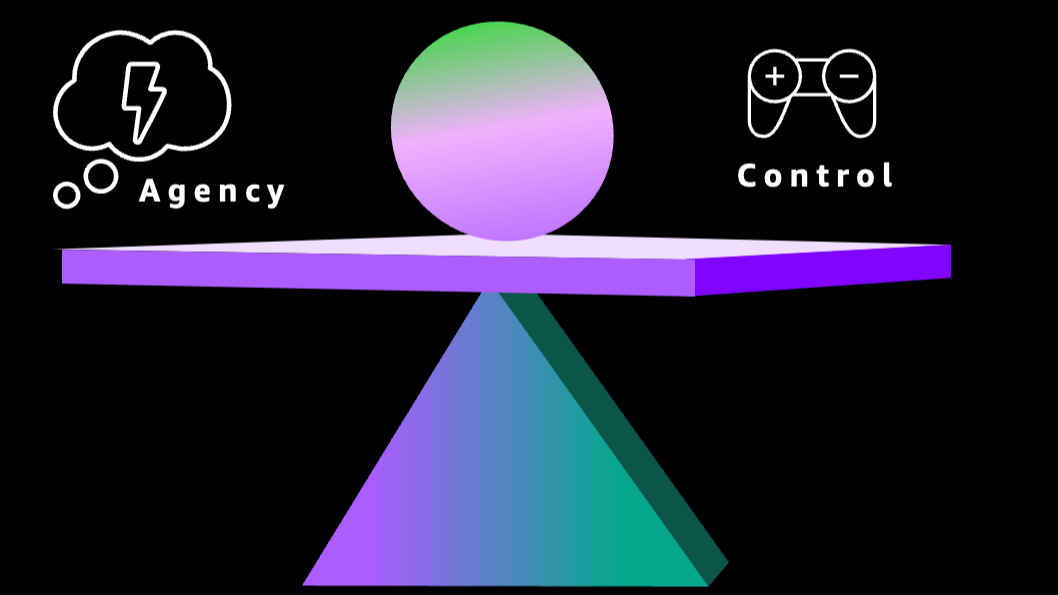

⚖️ We need to balance Agency versus Control. We want AI systems to be super easy to use, read our minds, and just provide the answer we need. But we also need to make sure that nothing goes wrong. The more we control, the less agency we get. This is a balancing act.

Let’s focus on the control part. There are many different mechanisms to increase and guarantee control. Things like policies and guardrails come to mind. Those are obvious and powerful. I will cover them in a dedicated post.

🎯 From Chaos to Control: Building Predictable AI Agents That Get Smarter Over Time

Agentic systems are incredibly flexible, but ad-hoc code generation means unpredictable results and wasted resources. How do we fix this without losing the magic? The answer lies in tools—prebuilt, tested, reusable components that make your AI agents more capable, reliable, and cost-efficient with every interaction.
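As a rough illustration of what "tools instead of ad-hoc code" can look like, here is a minimal Python sketch of a tool registry. The names `TOOLS`, `tool`, `call_tool`, and `word_count` are hypothetical and do not come from any specific agent framework.

```python
from typing import Callable

# Hypothetical tool registry: maps a tool name to a prebuilt, tested function.
TOOLS: dict[str, Callable[..., object]] = {}


def tool(func):
    """Register a function so the agent can call it by name."""
    TOOLS[func.__name__] = func
    return func


@tool
def word_count(text: str) -> int:
    """Example tool: count whitespace-separated words."""
    return len(text.split())


def call_tool(name: str, **kwargs):
    """Dispatch to a registered tool instead of generating ad-hoc code."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)


print(call_tool("word_count", text="tools beat ad-hoc code"))  # prints 4
```

Because each tool is written and tested once, the agent's behavior on that step is the same on every call, and no tokens are spent regenerating the logic.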

With the right approach, your agents can become smarter and more efficient over time. Dive deeper in the article below.

When a 'Model' Isn't Just a Model: Redefining AI Systems for the Builder's Era

🎬 Great keynote by Jensen Huang at CES 2026 [1]! Great content and also love the ease of his presentation style. Miguel: We are not the only ones presenting in front of a black screen once in a while ;)

🔓 I agree with Jensen, it’s super exciting to see more and 𝗺𝗼𝗿𝗲 𝗼𝗽𝗲𝗻-𝗶𝘀𝗵 𝗳𝗿𝗼𝗻𝘁𝗶𝗲𝗿 𝗺𝗼𝗱𝗲𝗹𝘀 𝗯𝗲𝗶𝗻𝗴 𝗽𝘂𝗯𝗹𝗶𝘀𝗵𝗲𝗱 by different providers. Sounds like NVIDIA is taking a big stake in this. What is really key for me is that providers release not “just” open-weight models but also the data they trained on and the process used to train them. Jensen mentions the obvious responsible AI argument, which is super important. This is the only way third parties can verify the models and understand things like bias introduced by the training data, copyright infringements, and the like. From my perspective, equally important: 𝗢𝗽𝗲𝗻 𝗶𝘀 𝗼𝗻𝗹𝘆 𝘁𝗿𝘂𝗹𝘆 𝗼𝗽𝗲𝗻 𝘁𝗼 𝗺𝗲 𝗶𝗳 𝗜 𝗰𝗮𝗻 𝗯𝘂𝗶𝗹𝗱 𝗶𝘁, 𝗺𝗼𝗱𝗶𝗳𝘆 𝗶𝘁 𝘁𝗼 𝗺𝗮𝗸𝗲 𝗺𝘆 𝗼𝘄𝗻 𝘃𝗮𝗿𝗶𝗮𝗻𝘁, 𝗮𝗻𝗱 𝗜’𝗺 𝗮𝗹𝗹𝗼𝘄𝗲𝗱 𝘁𝗼 𝗱𝗼 𝘀𝗼.

🌅 The Dawn of the Renaissance Developer

It’s that time of the year. The AWS community is getting ready for the event of the year: re:Invent. And Werner has published his tech predictions [1]. Like every year, it is a densely packed piece with loads of gems in it. This year Werner came up with 5 major themes, if I didn’t miscount. I covered the first one in my initial post [2].

The second one is about: