🎯 “How do we pick the RIGHT AI agent use case?”

This is the question I hear most from customers exploring agentic AI.

Here’s the mechanism I work through together with the customer:

The 4-Quadrant Evaluation

When a customer brings me 5-10 agent ideas, we structure each one across four dimensions:

📊 Business Value & Strategic Fit
→ What pain does it solve? For whom? How often?
→ Can we quantify the impact? (Revenue, cost, time, quality)
→ Which KPI moves if this works for 6 months?

Passing on control to your AI coding agent team entirely?

Anthropic researcher Nicholas Carlini conducted a stress test of their Claude Opus 4.6 model by deploying 16 parallel AI agents to build a complete C compiler in Rust from scratch (https://lnkd.in/eGMp4b2K). Over approximately two weeks and nearly 2,000 Claude Code sessions, the agents autonomously produced a 100,000-line compiler capable of compiling the Linux 6.9 kernel across multiple architectures (x86, ARM, and RISC-V). The experiment cost around $20,000 in API fees and demonstrated that coordinated AI agent teams can tackle complex systems programming challenges that traditionally require significant human expertise and architectural oversight.

AI coding has quickly developed from an interesting research project into an important tool in every software developer's tool belt. Tools like #kiro let you define subagents that take on specific responsibilities within the software project, speeding up development and improving quality. It's also a nice way to navigate overcrowded context windows.

But where do you start? How do you identify subagents that can improve the team and, subsequently, how do you come up with a first version of those agents?

Kiro Subagents: Scaling Development with Specialized AI Agents

When you’re building complex software, context management becomes your bottleneck. Your AI agent is juggling frontend components, backend APIs, database schemas, testing frameworks, and documentation—all competing for limited context window space. The result? Diluted focus and suboptimal outputs.

Kiro Subagents solve this architectural challenge by enabling parallel task execution through specialized, autonomous agents that maintain independent context windows.
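To make the idea of parallel agents with independent contexts concrete, here is a minimal sketch in plain Python. It is not Kiro's API: the `Subagent` class, the `orchestrate` function, and the task names are all hypothetical, and the model call is replaced by a placeholder. It only illustrates that each agent appends to its own context while the tasks run concurrently.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class Subagent:
    """Hypothetical stand-in for a specialized agent; not Kiro's actual API."""
    name: str
    focus: str
    context: list[str] = field(default_factory=list)  # private to this agent

    async def run(self, task: str) -> str:
        # Only this agent's context grows; sibling agents never see these entries.
        self.context.append(f"task: {task}")
        await asyncio.sleep(0)  # placeholder for a real model call
        result = f"[{self.name}] finished: {task}"
        self.context.append(f"result: {result}")
        return result


async def orchestrate(assignments: dict[str, tuple[str, str]]):
    """Create one subagent per assignment and run all tasks concurrently."""
    agents = [Subagent(name, focus) for name, (focus, _) in assignments.items()]
    tasks = [task for _, task in assignments.values()]
    # gather() returns results in the same order as the awaitables passed in.
    results = await asyncio.gather(*(a.run(t) for a, t in zip(agents, tasks)))
    return list(results), agents


def demo():
    assignments = {
        "frontend": ("UI components", "build login form"),
        "backend": ("REST APIs", "add /login endpoint"),
    }
    return asyncio.run(orchestrate(assignments))
```

Calling `demo()` returns the two results plus the agents, so you can inspect that the backend agent's context contains nothing about the frontend task.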

🏗️ The Architecture: Parallel Contexts, Focused Execution

Subagents operate as independent processes with their own context management. This architectural pattern delivers several technical advantages:

🎯 From Chaos to Control: Building Predictable AI Agents That Get Smarter Over Time

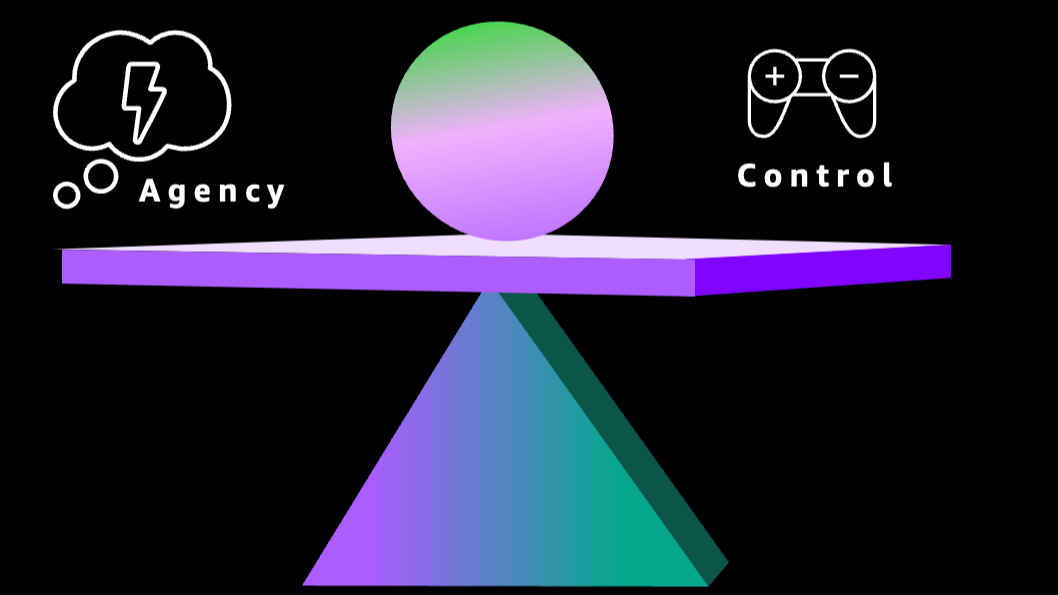

⚖️ We need to balance Agency versus Control. We want AI systems to be super easy to use, read our minds, and just provide the answer we need. But we also need to make sure that nothing goes wrong. The more we control, the less agency we get. This is a balancing act.

Let’s focus on the control part. There are many different mechanisms to increase and guarantee control. Things like policies and guardrails come to mind. Those are obvious and powerful. I will cover them in a dedicated post.

🎯 From Chaos to Control: Building Predictable AI Agents That Get Smarter Over Time

Agentic systems are incredibly flexible, but ad-hoc code generation means unpredictable results and wasted resources. How do we fix this without losing the magic? The answer lies in tools—prebuilt, tested, reusable components that make your AI agents more capable, reliable, and cost-efficient with every interaction.
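As a rough illustration of tools as prebuilt, tested, reusable components, the sketch below registers a few plain Python functions and lets an agent call them by name instead of generating code ad hoc. The registry `TOOLS`, the `tool` decorator, `call_tool`, and the two example tools are hypothetical names chosen for this sketch; they do not come from any specific agent framework.

```python
from typing import Callable

# Hypothetical registry mapping tool names to prebuilt, tested functions.
TOOLS: dict[str, Callable[..., object]] = {}


def tool(func):
    """Register a function as a reusable tool under its own name."""
    TOOLS[func.__name__] = func
    return func


@tool
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


@tool
def celsius_to_fahrenheit(celsius: float) -> float:
    """Convert a temperature from Celsius to Fahrenheit."""
    return celsius * 9 / 5 + 32


def call_tool(name: str, **kwargs):
    """Dispatch a request to a registered tool; refuse anything unregistered."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)
```

Because every call goes through `call_tool`, the same request produces the same result each time, and an unknown tool name fails loudly instead of triggering freshly generated code.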

With the right approach, your agents can become smarter and more efficient over time. Dive deeper in the article below.

😱 𝗔𝗿𝗴𝗵 - 𝗠𝘆 𝗔𝗜 𝗔𝗴𝗲𝗻𝘁 𝗱𝗲𝗹𝗲𝘁𝗲𝗱 𝗮𝗹𝗹 𝗺𝘆 𝗳𝗶𝗹𝗲𝘀!!!! Worried about AI agents running amok with your data? Before panicking, consider this: we’ve been solving permission and access control problems for decades with human coworkers. Let’s apply those same principles to our new AI teammates and find the right balance between agency and control. #AIAgents #FutureOfWork

Argh - My AI Agent deleted all my files

😱 “Argh - My AI Agent deleted all my files!!!!”

When was the last time one of your co-workers deleted an important file from your desktop?

I would hope it was a long time ago, and quite possibly never.

🖥️ Even back when we used shared computers, home directories were kept separate.

There was a notion of shared folders, network folders, or whatever nomenclature your system used. So to give coworkers access to a file, you first had to decide to upload it to a shared folder.

When a 'Model' Isn't Just a Model: Redefining AI Systems for the Builder's Era

🎬 Great keynote by Jensen Huang at CES 2026 [1]! Great content, and I also love the ease of his presentation style. Miguel: We are not the only ones presenting in front of a black screen once in a while ;)

🔓 I agree with Jensen: it’s super exciting to see more and 𝗺𝗼𝗿𝗲 𝗼𝗽𝗲𝗻-𝗶𝘀𝗵 𝗳𝗿𝗼𝗻𝘁𝗶𝗲𝗿 𝗺𝗼𝗱𝗲𝗹𝘀 𝗯𝗲𝗶𝗻𝗴 𝗽𝘂𝗯𝗹𝗶𝘀𝗵𝗲𝗱 by different providers. It sounds like NVIDIA is taking a big stake in this. What is really key for me is that providers release not “just” open-weight models but also the data they trained on and the process used to train them. Jensen mentions the obvious responsible AI argument, which is super important: this is the only way third parties can verify the models and understand things like bias introduced by the training data, copyright infringement, and the like. From my perspective, equally important: 𝗢𝗽𝗲𝗻 𝗶𝘀 𝗼𝗻𝗹𝘆 𝘁𝗿𝘂𝗹𝘆 𝗼𝗽𝗲𝗻 𝘁𝗼 𝗺𝗲 𝗶𝗳 𝗜 𝗰𝗮𝗻 𝗯𝘂𝗶𝗹𝗱 𝗶𝘁, 𝗺𝗼𝗱𝗶𝗳𝘆 𝗶𝘁 𝘁𝗼 𝗺𝗮𝗸𝗲 𝗺𝘆 𝗼𝘄𝗻 𝘃𝗮𝗿𝗶𝗮𝗻𝘁, 𝗮𝗻𝗱 𝗜’𝗺 𝗮𝗹𝗹𝗼𝘄𝗲𝗱 𝘁𝗼 𝗱𝗼 𝘀𝗼.

✨ “It has never been a better time to be excited about the future.”

🔍 I missed this interview back in October last year when Jeff Bezos compared today’s AI boom to the internet bubble of the 2000s at Italian Tech Week 2025 [1]. He warned of hype but insisted AI is “real” and will transform every industry. In the interview, he explained why industrial bubbles can benefit society and predicted that AI will raise both productivity and quality worldwide.