Is RAG Still Needed with 1M+ Token Context Windows?

The Kofferklausur, Revisited

In September 2024, a colleague asked an audience: “What is RAG?” I answered: Kofferklausur [1].

For non-German speakers: a Kofferklausur is an open-book exam. You bring your textbooks, notes, everything. The exam doesn’t test what you memorized — it tests whether you can find the right information and reason about it under pressure.

That analogy stuck with me. A foundation model is the student. RAG is the suitcase full of books. The model doesn’t need to memorize every fact — it needs to know how to find the right one and reason about it. Special-purpose tools beat the Swiss Army knife.

There’s one important difference, though: in an open-book exam, the student knows the questions. In production, queries are unpredictable — which actually strengthens the case for retrieval. You can’t pre-load everything into context when you don’t know what will be asked.

Eighteen months later, the exam has changed. The student got smarter (1M+ token context windows). The suitcase got better (agentic RAG, contextual retrieval). And now there’s something new on the desk: notes from every previous exam — agent memory.

The Question That Keeps Coming Up

Every few weeks, a customer asks: “Context windows are 1 million tokens now. Do we still need RAG?”

It’s a fair question. When Gemini offers 2 million tokens and Claude handles 1 million with no long-context surcharge, the idea of stuffing everything into the context window is tempting. No retrieval pipeline, no vector database, no chunking strategy. Just dump it all in and ask.

The Short Answer

Yes, RAG is still needed. But it’s no longer the whole story.

The long-context vs RAG debate is a false dichotomy. They solve different problems — and agent memory adds a third dimension the original debate missed entirely.

Where Long Context Wins

Long context windows genuinely shine for:

- Single-document deep analysis — legal contracts, research papers, codebases. When you need the model to reason across an entire document, stuffing it into context works.

- Multi-turn conversations with history — keeping the full conversation in context avoids the “goldfish memory” problem.

- Cross-referencing within a bounded set — comparing 5-10 documents where all the information fits.

The NIAH (Needle in a Haystack) benchmarks show near-perfect retrieval for synthetic tests [2]. Models can find a specific fact buried in hundreds of thousands of tokens.

Where RAG Still Wins

But NIAH benchmarks are misleading for real-world use. The LaRA benchmark — testing on actual enterprise documents, not synthetic needles — shows significant degradation as context grows [3]. Real documents have ambiguity, contradiction, and nuance that synthetic tests don’t capture. RAG achieves an 82.58% win-rate over long-context LLMs in these more realistic evaluations [4].

RAG remains essential for:

- Enterprise-scale data — no context window fits a company’s entire knowledge base. A 1M token window holds roughly 750K words — impressive, but a mid-size company’s documentation exceeds this by orders of magnitude.

- Cost efficiency — processing 1M tokens per query is expensive. RAG retrieves only the relevant chunks, keeping costs proportional to the answer, not the corpus. (Though for low-volume, high-value queries like executive briefings, the fixed infrastructure cost of RAG may exceed long-context costs — the break-even depends on query volume and corpus size.)

- Freshness — context windows contain static snapshots. RAG pipelines can index new documents in real-time.

- Precision — retrieval with good embeddings and reranking often surfaces more relevant content than hoping the model attends to the right section of a massive context.

- Auditability — RAG provides citations. You can trace which documents informed the answer. Long context is a black box.

The Third Dimension: Agent Memory

The student’s toolkit: a suitcase of books (RAG), a notebook from previous exams (memory), and a brain that holds a million words (long context).

Here’s what the original RAG-vs-long-context debate misses: neither approach handles personal context — what happened in previous conversations, what the user prefers, what decisions were made last week.

Back to our Kofferklausur analogy. The student now has three knowledge sources:

- What they studied — training data and long context (the brain)

- The books on the desk — RAG, authoritative external knowledge (the suitcase)

- Notes from every previous exam — agent memory, personal context and learned patterns (the notebook)

Amazon Bedrock AgentCore Memory makes this explicit. The AWS documentation draws a clean line [5]: long-term memory answers “who is the user and what happened before” while RAG answers “what do trusted sources say currently.” Memory handles personal context and session continuity. RAG handles factual grounding. They’re complementary, not competing.

This matters because the most capable AI systems aren’t just retrieving facts — they’re building relationships. An agent that remembers your architecture preferences, your team’s naming conventions, and the decision you made three sprints ago is fundamentally more useful than one that starts fresh every conversation.

One caveat: the memory feedback loop is powerful but not self-correcting. If an agent incorrectly remembers a past decision, that error compounds across sessions. Unlike RAG — where you can update source documents — memory errors are harder to detect. AgentCore Memory provides APIs to manage records, but detecting incorrect memories is still largely manual. This is an active area of development.

Minimal Sufficient Abstraction

PwC’s Chronos system recently scored 95.6% on LongMemEval — the highest score ever recorded for conversational AI memory [6]. The design principle is counterintuitive: most memory architectures try to structure everything upfront — knowledge graphs, entity extraction, elaborate representations. Chronos does the opposite. It leaves conversations as raw turns, retrievable through standard dense search. The only thing it structures is time.

Matt Wood calls this “minimal sufficient abstraction” — defer abstraction until you know what abstraction is needed. The system that structures less outperforms the systems that structure more. By nearly 7 percentage points.

Peter Tilsen raises a valid counterpoint: Chronos excels at temporal retrieval but leaves open the question of cross-connecting “temporarily unrelated items” — things memorized today that gain significance later through unexpected connections [8]. It solves one memory problem well but doesn’t address the full spectrum of cognitive capability. The honest answer: no single system does yet.

This reinforces the broader pattern: the best architectures don’t try to solve everything with one approach. They use the right tool for each dimension — long context for reasoning, RAG for factual retrieval, structured temporal indexing for memory — and compose them.

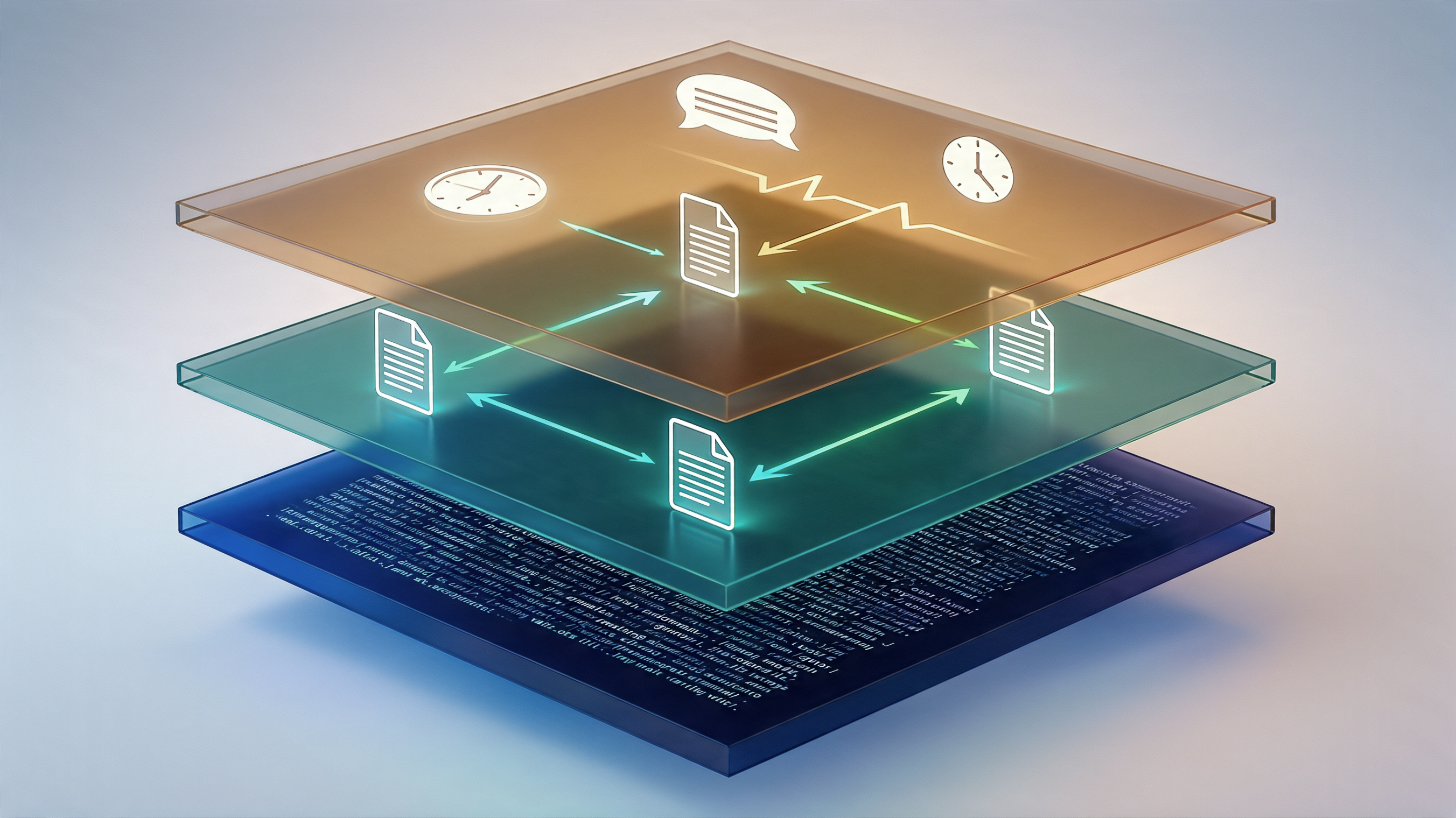

The Hybrid Architecture

Three knowledge layers: long context (blue), RAG retrieval (green), agent memory (amber). The best architectures compose all three.

The emerging consensus — and what I recommend to customers — is a three-layer approach:

- RAG for retrieval — find the relevant documents from a large corpus

- Long context for reasoning — load the retrieved documents into a generous context window for deep analysis

- Agent memory for continuity — remember what happened before, what the user prefers, what decisions were made

On AWS, this maps to: Amazon Bedrock Knowledge Bases for the retrieval layer, a long-context model (Claude, Nova) for reasoning, AgentCore Memory for persistent context, and Bedrock Guardrails for output validation.

The three-layer hybrid: RAG retrieves facts, Memory provides personal context, Long Context enables deep reasoning.

The Decision Framework

| Scenario | Best Approach | Why |

|---|---|---|

| Single long document (contract, paper) | Long context | Holistic reasoning across the full document |

| Repeated queries over large corpus | RAG | Index once, retrieve cheaply per query |

| Enterprise knowledge base (TB+ of docs) | RAG | No context window fits it all |

| Real-time / frequently updated data | RAG | Re-indexing beats re-prompting |

| Personal context across sessions | Agent Memory | Preferences, decisions, history |

| Complex multi-part enterprise queries | Agentic RAG | Dynamic query decomposition, iterative retrieval |

| The full picture | All three | Memory + RAG + long context, composed |

The Real Question

The student of 2026: books, notes from every previous exam, and a brain that holds a million words.

The question isn’t “RAG or long context?” anymore. It’s: how does your agent decide which knowledge source to use for each sub-task?

The Kofferklausur student of 2024 brought books. The student of 2026 brings books, notes from every previous exam, and a brain that can hold a million words at once. The exam hasn’t gotten easier — but the toolkit has gotten dramatically better.

My own thinking has evolved with it. In 2024, I defended RAG against the “it’s just a workaround” crowd [1]. That position was correct for 2024 — 200K context windows, no managed agent memory. The three-layer architecture I’m describing now reflects new capabilities, not a change of mind. The principle remains: special-purpose tools beat the Swiss Army knife. We just have more tools now.

The architecture should match the problem, not the hype cycle.

💬 How are you balancing RAG, long context, and agent memory in your architectures? What’s working — and what’s still missing?

Sources:

[1] My earlier post on RAG as Kofferklausur (Sep 2024): https://www.linkedin.com/feed/update/urn:li:share:7244647832249331712

[2] NIAH benchmark results — Unified RAG and LLM Evaluation for Long Context: https://arxiv.org/html/2503.00353v1

[3] LaRA: Benchmarking RAG and Long-Context LLMs on real enterprise documents: https://arxiv.org/html/2502.09977

[4] RAG achieves 82.58% win-rate over long-context LLMs in NIAH tasks: https://arxiv.org/html/2503.00353v1

[5] AWS — Compare Long-Term Memory with RAG: https://docs.aws.amazon.com/bedrock-agentcore/latest/devguide/memory-ltm-rag.html

[6] Matt Wood on PwC’s Chronos memory system (95.6% on LongMemEval): https://www.linkedin.com/posts/themza_pwc-just-achieved-the-highest-score-ever-activity-7441487446096760832

[7] Alex Wang — Is RAG Still Needed?: https://www.linkedin.com/pulse/rag-still-needed-alex-wang-m3mgc/

[8] Peter Tilsen’s commentary on Chronos and cross-temporal memory: https://www.linkedin.com/posts/petertilsen_memory-agenticmemory-activity-7441887446096760832