Software Fundamentals Matter More Than Ever

The Talk That Confirmed What I’ve Been Seeing

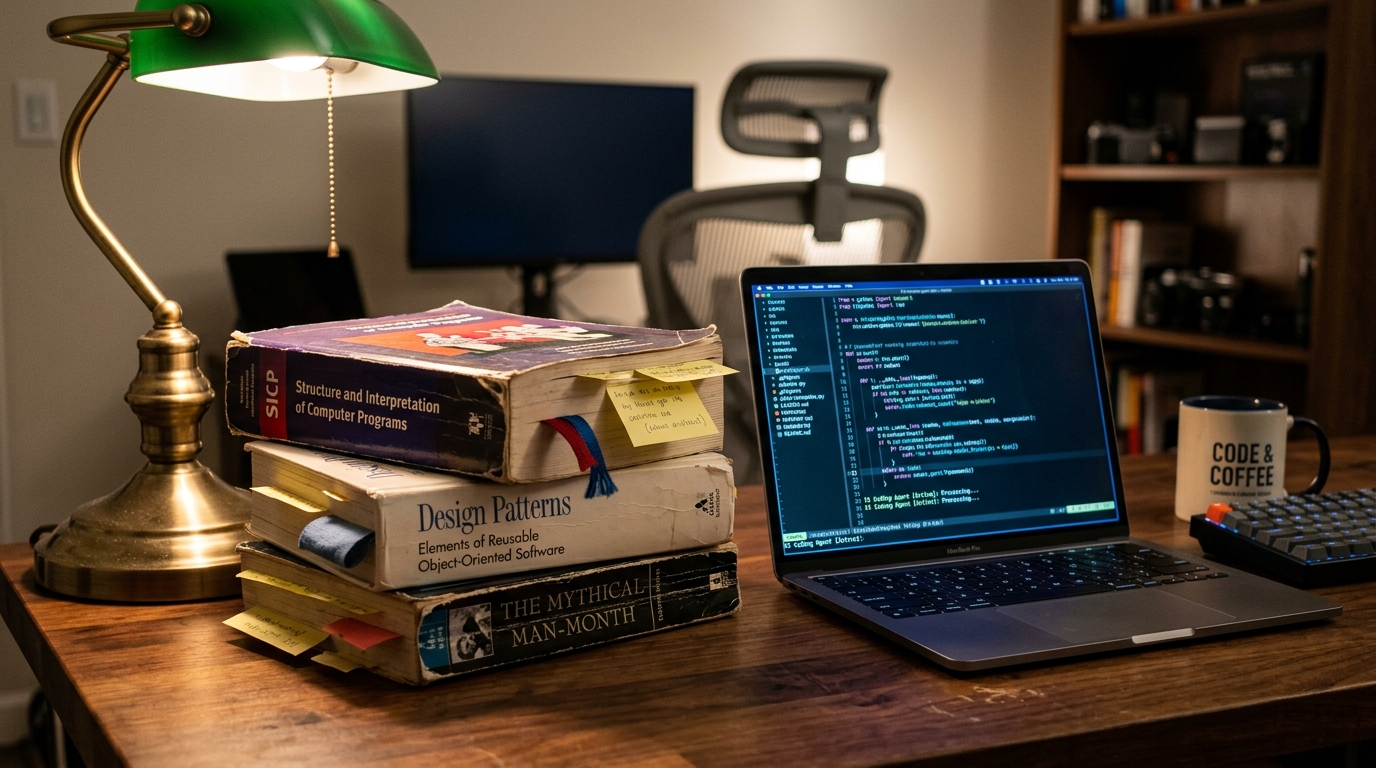

The books haven’t changed. The principles haven’t changed. The context has.

Matt Pocock stood on stage at the AI Engineer Summit and said something that most of the audience needed to hear: the developers who succeed with AI coding agents aren’t the ones who delegate everything. They’re the ones who fall back on engineering fundamentals [1].

After 18 months of teaching developers to build with AI agents through his “Claude Code for Real Engineers” course, Pocock has watched the same patterns emerge. The skills that matter aren’t new. They’re decades old. And they didn’t break when AI arrived. They got more important.

I’ve been making a similar argument in my writing on agentic coding [2][3], but Pocock brings something I don’t have: data from hundreds of students hitting the same walls. His failure modes are a practitioner’s field guide to what goes wrong, and his fixes are all rooted in books written before AI coding agents existed, several of them more than two decades ago.

The Specs-to-Code Trap

Pocock opens with the “specs to code” movement: write a specification, run the AI compiler, get code. If there’s a problem, change the spec, run the compiler again. Don’t look at the code.

He tried it. The first run produced code. The second run produced worse code. The third run produced garbage.

His diagnosis: this is vibe coding by another name. And the idea that “code is cheap,” that you can just regenerate it, is dangerously wrong. Bad code is the most expensive it’s ever been, because a codebase that’s hard to change prevents you from using AI effectively. Good codebases let AI do extraordinary work. Bad codebases make AI produce extraordinary messes.

This maps directly to what I observed in my “On the Loop” article [2]: when an agent reads a poorly named function, it builds confidently on a wrong assumption and spirals for several iterations before discovering the name was misleading. The agent doesn’t know the code is bad. It trusts the names, the structure, the contracts. If those lie, the agent lies too.

To understand why, Pocock reaches for John Ousterhout’s A Philosophy of Software Design [4]. Ousterhout defines complexity as “anything related to the structure of a software system that makes it hard to understand and modify.” A bad codebase isn’t one with ugly formatting. It’s one that’s hard to change. And if you can’t change a codebase without causing bugs, AI can’t either.

Then he grabs The Pragmatic Programmer by Hunt and Thomas [5], which devotes a section to software entropy: the idea that every change to a codebase, if you’re only thinking about that change and not the design of the whole system, makes the codebase worse. This is exactly what the specs-to-code loop produces. Each regeneration adds entropy. The code decays.

Failure Mode 1: “The AI Didn’t Do What I Wanted”

You had a clear idea in your head. The AI built something completely different. Pocock’s diagnosis: you and the AI don’t share a design concept.

The term comes from Frederick P. Brooks’ The Design of Design [6]. Brooks argues that when two entities design something together, there’s an invisible, ephemeral idea floating between them: the design concept. It’s not a document. It’s not a markdown file. It’s the shared mental model of what you’re building. If you and the AI don’t share it, the output will miss.

Pocock’s solution is a skill called “grill-me”: a few lines of instruction that tell the AI to interview you relentlessly about every aspect of the plan until you reach a shared understanding. It walks down each branch of the “design tree” (another Brooks concept), resolving dependencies between decisions one by one. The AI might ask 40, 60, even 100 questions before it’s satisfied.

At the time of writing, the skill repo has 24.8k stars on GitHub [7]. People clearly needed this.

The conversation that “grill-me” generates becomes the foundation for a product requirements document. Or, for smaller changes, it feeds directly into implementation tasks. Pocock’s view: this is better than the default “plan mode” in tools like Claude Code, which he describes as “extremely eager to create an asset” before a shared understanding exists.
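To make "resolving dependencies between decisions one by one" concrete, here is a minimal sketch of the ordering idea. It is not how the grill-me skill works (that is a short prompt, not code), and the decisions and their dependencies below are hypothetical examples of mine. It only shows that when decisions depend on each other, there is a sensible order in which to ask about them.

```python
from graphlib import TopologicalSorter

# Hypothetical design decisions (not from the talk). Each decision maps to the
# decisions that have to be settled before it can be discussed sensibly.
DEPENDS_ON: dict[str, set[str]] = {
    "storage engine": set(),
    "data model": {"storage engine"},
    "API shape": {"data model"},
    "auth scheme": set(),
    "rate limiting": {"API shape", "auth scheme"},
}


def question_order(depends_on: dict[str, set[str]]) -> list[str]:
    """Return the decisions ordered so that every prerequisite comes first."""
    return list(TopologicalSorter(depends_on).static_order())


if __name__ == "__main__":
    for decision in question_order(DEPENDS_ON):
        print(f"Next question: what do we decide about the {decision}?")
```

In a real session the interviewer discovers these dependencies as it goes, which is why the questioning can run so long.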

Failure Mode 2: “The AI Is Too Verbose, and We’re Talking Past Each Other”

The AI uses too many words. You’re not speaking the same language. Every interaction has friction because terms mean different things to each of you.

Pocock’s fix comes from Domain-Driven Design (DDD), specifically Eric Evans’ concept of ubiquitous language [8]. In DDD, ubiquitous language is a shared vocabulary that developers, domain experts, and the codebase all use consistently. The same terms appear in conversations, in code, and in documentation. No translation layer. No ambiguity.

Evans introduced this in his 2003 book Domain-Driven Design: Tackling Complexity in the Heart of Software [8]. The core insight, as I read it: many software bugs aren’t logic errors. They’re communication errors. When the code says “order” but the business means “reservation,” every conversation introduces drift.

Pocock applies this directly to AI: if you and the agent aren’t using the same terms for the same concepts, the agent’s thinking traces are verbose and its implementations drift from your intent. His skill scans the codebase, extracts terminology, and creates a markdown glossary. Both sides use it consistently.

The result: the AI thinks in a less verbose way, and the implementation aligns more closely with what was planned. Pocock calls it “unbelievably good.”
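As an illustration of what a shared glossary buys you, here is a minimal sketch, assuming a small booking domain. The terms, the `Term` type, and the `find_drift` helper are all hypothetical and are not taken from Pocock’s skill. The sketch renders a glossary as markdown and flags text that uses a discouraged synonym instead of the agreed term.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Term:
    """One glossary entry: the agreed name plus synonyms to avoid."""
    name: str
    definition: str
    avoid: tuple[str, ...] = ()


# Hypothetical glossary for a booking domain (illustrative only).
GLOSSARY = [
    Term("reservation", "A held slot that has not been paid for yet.", ("order", "booking")),
    Term("guest", "The person the reservation is for.", ("customer", "user")),
]


def render_markdown(glossary: list[Term]) -> str:
    """Render the glossary as a markdown table that people and agents can both read."""
    rows = ["| Term | Definition | Avoid |", "| --- | --- | --- |"]
    for term in glossary:
        rows.append(f"| {term.name} | {term.definition} | {', '.join(term.avoid)} |")
    return "\n".join(rows)


def find_drift(text: str, glossary: list[Term]) -> list[tuple[str, str]]:
    """Return (synonym_used, agreed_term) pairs for each discouraged synonym found."""
    hits = []
    for term in glossary:
        for synonym in term.avoid:
            # Whole-word, case-insensitive match that also accepts a plural "s".
            if re.search(rf"\b{re.escape(synonym)}s?\b", text, flags=re.IGNORECASE):
                hits.append((synonym, term.name))
    return hits
```

Running `find_drift` over a prompt, a PRD draft, or a commit message gives a cheap signal that the vocabulary is slipping, in this example “order” where the team agreed on “reservation.”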

Failure Mode 3: “The AI Keeps Breaking Things”

The AI produces huge amounts of code in one go, then realizes it should probably test it. By then, the damage is done. The Pragmatic Programmer calls this “outrunning your headlights”: driving faster than your feedback loop allows [5].

Pocock’s fix: Test-Driven Development (TDD). Write the test first. Let the AI implement until the test passes. Refactor. Repeat.

TDD isn’t new. Kent Beck formalized it in Test-Driven Development: By Example [9] back in 2002. But Pocock argues it’s even more powerful with AI agents than with humans, because the agent can run the red-green-refactor loop much faster. The test becomes the specification. The agent becomes the implementation engine. The feedback loop tightens from minutes to seconds.
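Here is a minimal sketch of one red-green cycle, using a hypothetical `parse_duration` function of my own choosing. The test is written first and acts as the specification. The implementation below it is the part an agent would iterate on until the test passes.

```python
import re


# Step 1 (red): the test exists before the implementation and defines "done".
def test_parse_duration() -> None:
    assert parse_duration("45s") == 45
    assert parse_duration("2m") == 120
    assert parse_duration("1h30m") == 5400
    try:
        parse_duration("soon")
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for unparseable input")


# Step 2 (green): a simple implementation that makes the test pass.
_UNIT_SECONDS = {"h": 3600, "m": 60, "s": 1}
_PART = re.compile(r"(\d+)([hms])")


def parse_duration(text: str) -> int:
    """Convert strings such as '1h30m' into a number of seconds."""
    # Reject anything that is not a sequence of <number><unit> parts.
    if not re.fullmatch(r"(?:\d+[hms])+", text):
        raise ValueError(f"cannot parse duration: {text!r}")
    return sum(int(amount) * _UNIT_SECONDS[unit] for amount, unit in _PART.findall(text))


if __name__ == "__main__":
    test_parse_duration()
    print("all tests pass")
```

Step 3 (refactor) then happens with the test as a safety net: the internals can change freely as long as `test_parse_duration` stays green.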

The catch: testing is hard. You need to decide how big a unit to test, what to mock, which behaviors matter. And all these decisions are interdependent. Which brings us to the next failure mode.

Failure Mode 4: “The Codebase Is Getting Worse Over Time”

Good codebases are easy to test. Bad codebases aren’t. And AI is really good at creating bad codebases: shallow modules, scattered dependencies, no clear boundaries.

Pocock goes back to Ousterhout’s A Philosophy of Software Design [4] for the concept of deep modules: relatively few, large modules with simple interfaces that hide complexity behind clean boundaries. The opposite, shallow modules, expose complex interfaces with little functionality behind them.

In a codebase full of shallow modules, the AI has to navigate a maze of tiny interconnected pieces. It doesn’t understand the dependencies. It changes one thing and breaks three others. In a codebase full of deep modules, the AI can work within clear boundaries. Test at the interface, verify at the interface.
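A small sketch of the difference, using a hypothetical settings parser (the names are mine, not Ousterhout’s or Pocock’s). The three helpers are the kind of thin functions that, exposed publicly, force every caller to know the right order to call them in. Hiding them behind one function with a one-line contract is what makes the module deep.

```python
# Internal steps. Exposed on their own, each would be a shallow module:
# almost as much interface as functionality, plus an ordering rule that
# every caller would have to remember.
def _strip_comments(lines: list[str]) -> list[str]:
    return [line.split("#", 1)[0].strip() for line in lines]


def _drop_blank(lines: list[str]) -> list[str]:
    return [line for line in lines if line]


def _split_pairs(lines: list[str]) -> list[tuple[str, str]]:
    pairs = []
    for line in lines:
        key, separator, value = line.partition("=")
        if not separator or not key.strip():
            raise ValueError(f"expected KEY=VALUE, got {line!r}")
        pairs.append((key.strip(), value.strip()))
    return pairs


# The deep interface: one function, one sentence of contract.
def load_settings(text: str) -> dict[str, str]:
    """Parse KEY=VALUE text into a dict. '#' comments and blank lines are ignored."""
    lines = _drop_blank(_strip_comments(text.splitlines()))
    return dict(_split_pairs(lines))
```

A test only needs to exercise `load_settings`, so the helpers can be merged, renamed, or rewritten without touching a single caller or test.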

Pocock has a skill for this too: “improve-codebase-architecture.” It systematically identifies related code scattered across shallow modules and wraps it into deep modules with clean interfaces. The result is a codebase that rewards TDD, because the boundaries are testable.

Failure Mode 5: “I Can’t Keep Up With What the AI Is Doing”

The AI is producing code inside modules faster than you can review it. You’re losing track of what’s happening.

Pocock’s fix: design the interface, delegate the implementation. As long as you have a testable boundary outside the module and understand its purpose, you can let the AI handle the internals. Test from the outside. Verify from the outside. Don’t inspect every line.

This is the “on the loop” pattern in miniature [2]: you design the constraints (the interface), the agent operates within them (the implementation). You don’t review every output. You verify the contract holds.
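A minimal sketch of that division of labor, assuming a hypothetical rate limiter. You own the interface and the contract test. The class at the bottom is one possible implementation, the part you could hand to an agent and verify only from the outside.

```python
from typing import Protocol


class RateLimiter(Protocol):
    """Human-designed interface: at most `limit` allowed calls per key per window."""

    def allow(self, key: str, now: float) -> bool: ...


def check_contract(make_limiter) -> None:
    """Contract test run from outside the module. It never inspects internals."""
    limiter = make_limiter(limit=2, window_seconds=10.0)
    assert limiter.allow("alice", now=0.0)
    assert limiter.allow("alice", now=1.0)
    assert not limiter.allow("alice", now=2.0)  # third call inside the window
    assert limiter.allow("bob", now=2.0)        # keys are independent
    assert limiter.allow("alice", now=11.5)     # earlier calls have expired


class SlidingWindowLimiter:
    """One possible implementation (hypothetical); the delegated part."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self._limit = limit
        self._window = window_seconds
        self._calls: dict[str, list[float]] = {}

    def allow(self, key: str, now: float) -> bool:
        # Keep only timestamps still inside the window, then check capacity.
        recent = [t for t in self._calls.get(key, []) if now - t < self._window]
        allowed = len(recent) < self._limit
        if allowed:
            recent.append(now)
        self._calls[key] = recent
        return allowed


if __name__ == "__main__":
    check_contract(SlidingWindowLimiter)
    print("contract holds")
```

If the agent later swaps the sliding window for a token bucket, `check_contract` is still the only thing you need to rerun.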

The key: you need to know your module map. Which modules exist, what their interfaces are, how they relate to each other. This needs to be part of your ubiquitous language and built into your planning. Pocock’s PRD skill is specific about module changes and interface modifications. He’s thinking about the architecture all the time.

And this is where Kent Beck’s principle lands: “Invest in the design of the system every day” [10]. The specs-to-code movement does the opposite. It divests from design. It treats the system as disposable. Pocock argues this is the core mistake.

The Strategic vs Tactical Split

Pocock’s closing frame ties it all together: think of AI as a great tactical programmer, a sergeant on the ground making code changes. You need someone above that, thinking at the strategic level. That’s you.

The strategic work is all fundamentals: design concepts (Brooks), complexity management (Ousterhout), shared language (Evans), feedback loops (Beck, Hunt & Thomas), and module architecture (Ousterhout again). None of these books were written for AI. All of them are more relevant because of AI.

This is the same split I described as the “Renaissance Developer” [11]: the developer who thrives in the age of AI isn’t the fastest typist, but the one who can think across disciplines and design the systems that agents execute within.

Where I’d Push Further

Pocock’s talk is focused on individual developer workflow. He doesn’t address the organizational dimension: what happens when teams of developers are all using AI agents with different levels of discipline? The entropy problem compounds. One developer’s clean module gets polluted by another developer’s AI-generated spaghetti.

This is where harness engineering [12] and spec-driven development become organizational concerns, not just individual practices. The harness isn’t just for your agent. It’s for your team’s agents.

I’d also push on the evaluation gap. Pocock’s TDD approach works brilliantly for functional correctness, but it doesn’t catch architectural drift. Your tests can all pass while your codebase slowly becomes unmaintainable. Property-based testing helps [2], but we still lack good tools for measuring codebase health over time in an agentic workflow.

The Reassuring Message

The most important thing Pocock said wasn’t technical. It was this: if you’ve spent years learning software engineering fundamentals, your skills are more valuable now, not less. The AI handles the tactical work. The strategic work (design, architecture, quality, naming, contracts) is still yours.

The books haven’t changed. The principles haven’t changed. The context has.

💬 Which software fundamental has become more important in your AI-assisted workflow? And which one have you been tempted to skip?

Sources:

[1] Matt Pocock — “Software Fundamentals Matter More Than Ever” (AI Engineer Summit, 2026): https://youtu.be/v4F1gFy-hqg

[2] My earlier article on code quality in the age of agents — “On the Loop, Not In It — But Code Quality Still Matters”: https://schristoph.online/blog/on-the-loop-code-quality/

[3] My earlier article on agentic coding risks — “Agentic Coding is a Slot Machine”: https://schristoph.online/blog/agentic-coding-slot-machine/

[4] John Ousterhout — A Philosophy of Software Design (2018): https://web.stanford.edu/~ouster/cgi-bin/aposd.php

[5] Andrew Hunt & David Thomas — The Pragmatic Programmer (1999, 20th Anniversary Edition 2019)

[6] Frederick P. Brooks Jr. — The Design of Design: Essays from a Computer Scientist (2010)

[7] Matt Pocock — Agent Skills repo (24.8k stars): https://github.com/mattpocock/skills

[8] Eric Evans — Domain-Driven Design: Tackling Complexity in the Heart of Software (2003)

[9] Kent Beck — Test-Driven Development: By Example (2002)

[10] Kent Beck — Extreme Programming Explained (1999, 2nd Edition 2004)

[11] My article on the changing developer role — “The Dawn of the Renaissance Developer”: https://schristoph.online/blog/the-dawn-of-the-renaissance-developer/

[12] Birgitta Böckeler — “Harness Engineering” (Thoughtworks): https://martinfowler.com/articles/exploring-gen-ai/harness-engineering.html